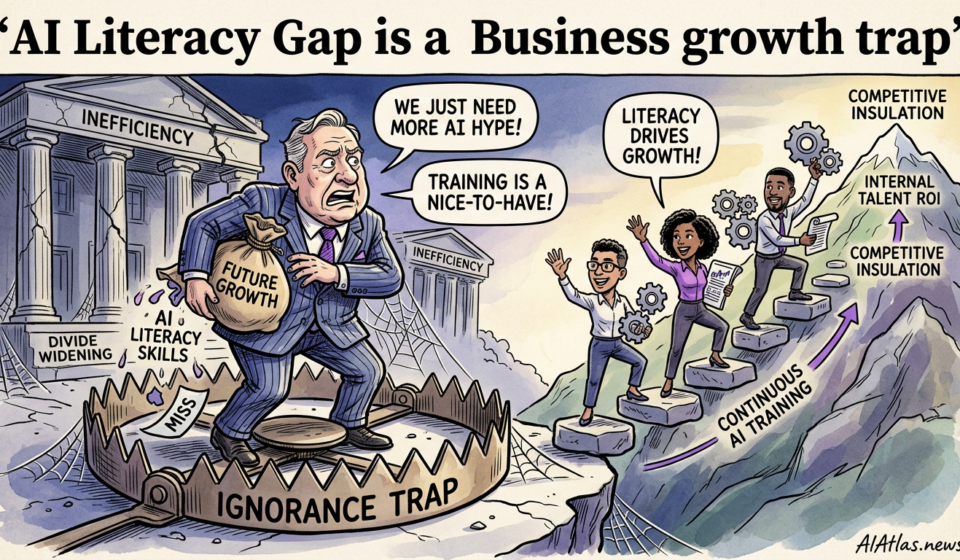

Why the AI Literacy Gap is a Business Growth Trap

The Strategic Objective

We read the March 2026 report on the escalating “AI literacy divide” as a wake-up call, not a trend to be observed from the sidelines. For CEOs, CTOs and founders the strategic objective is simple and non-negotiable: convert AI literacy from an optional skillset into an operational requirement that underpins product velocity, risk control and capital efficiency.

When frontline teams lack technical fluency — from product managers who can’t assess model behaviour to ops teams that cannot deploy safe inference — the result is predictable: slower delivery, higher vendor spend, fragile integrations and, ultimately, value leakage. Our aim is to show how disciplined internal education yields measurable ROI and creates a moat against competitors who outsource fluency instead of cultivating it.

Prerequisite Checklist

Before you buy licences or sign a partnership, we recommend a short, disciplined diagnostics phase. A binary “train everyone” approach wastes cash; targeted capability building aligned to business workflows does not.

Use the checklist below to determine readiness. Each item must be signed off by a named owner and have a delivery timeline attached.

- Executive sponsorship and a clear education budget committed for 12–24 months.

- Skills baseline audit: role-by-role mapping of required fluency, assessed via practical tests not surveys.

- Curated curriculum paths: core technical literacy, model evaluation, prompt engineering, data hygiene and compliance awareness.

- Infrastructure for hands-on practice: sandboxed compute, labelled datasets, and CI pipelines for model tests.

- Governance guardrails: playbooks for testing, deployment gates, and incident response for model drift and hallucination.
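The sign-off rule for this checklist (a named owner and a delivery timeline for every item) can be tracked as simple structured data. What follows is a minimal sketch rather than a prescribed tool: the `ChecklistItem` type, its field names, and the example owners and dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ChecklistItem:
    """One readiness item; all field names here are illustrative assumptions."""
    name: str
    owner: Optional[str] = None   # named individual accountable for sign-off
    due: Optional[date] = None    # delivery timeline attached to the item
    signed_off: bool = False


def blocking_items(items: list[ChecklistItem]) -> list[str]:
    """Return names of items missing an owner, a timeline, or a sign-off."""
    return [
        item.name
        for item in items
        if not (item.owner and item.due and item.signed_off)
    ]


def is_ready(items: list[ChecklistItem]) -> bool:
    """Readiness requires that no item is blocking."""
    return not blocking_items(items)


# Hypothetical example data, for illustration only.
checklist = [
    ChecklistItem("Executive sponsorship and budget", "A. Sponsor", date(2026, 6, 30), True),
    ChecklistItem("Skills baseline audit", "B. Lead", date(2026, 7, 15), False),
    ChecklistItem("Governance guardrails"),
]

print(is_ready(checklist))
print(blocking_items(checklist))
```

Returning the blocking items by name, rather than a bare yes or no, gives the sponsor a concrete list of what still needs an owner, a date, or a signature.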

Sequence of Operations (Steps 1–5)

Operationalising AI literacy is process work, not a one-off training day. We recommend a five-step rollout sequence that aligns learning investments to measurable business outcomes.

Each step should have a single accountable owner and an associated metric — for example, time-to-productive-use or number of validated experiments per quarter.

1. Baseline and Prioritise. Run the skills audit, identify high-impact teams (product, customer success, data ops) and select two pilot use-cases that will deliver revenue or cost savings within 90 days.

2. Design Practical Curriculum. Build modular pathways: short sprints of hands-on labs, code notebooks, and model-in-the-loop exercises. Avoid long theoretical courses that do not change behaviour.

3. Run Bootcamp and Embed Mentors. Pair external experts for 6–8 weeks with internal mentors. Mentors are the multiplier: they translate concepts into the company’s tech stack and business logic.

4. Operationalise Through Projects. Convert training into live experiments with guarded deployments. Use feature flags, canary releases and defined rollback criteria to keep production risk contained.

5. Measure, Iterate, Scale. Capture outcomes, refine curriculum based on failure modes, and scale to adjacent teams. Allocate budget to sustain the programme rather than repeating expensive vendor-led introductions.
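The "defined rollback criteria" in the Operationalise Through Projects step are easiest to enforce when they are written down as code before the canary goes live. The sketch below is one possible shape, under stated assumptions: the `RollbackCriteria` type, the `canary_decision` function and every threshold value are hypothetical placeholders, and a real team would choose its own metrics and limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RollbackCriteria:
    """Thresholds agreed before launch; the default values are placeholders."""
    max_error_rate_increase: float = 0.02  # absolute increase over baseline
    max_latency_ratio: float = 1.5         # canary p95 latency / baseline p95
    min_requests: int = 500                # sample size needed before judging


def canary_decision(
    baseline_error_rate: float,
    canary_error_rate: float,
    baseline_p95_ms: float,
    canary_p95_ms: float,
    canary_requests: int,
    criteria: RollbackCriteria = RollbackCriteria(),
) -> str:
    """Return 'wait', 'rollback' or 'promote' for a canary release."""
    # Too little traffic to judge: keep the canary running.
    if canary_requests < criteria.min_requests:
        return "wait"
    # Error rate rose by more than the agreed margin.
    if canary_error_rate - baseline_error_rate > criteria.max_error_rate_increase:
        return "rollback"
    # Latency degraded beyond the agreed ratio.
    if canary_p95_ms > baseline_p95_ms * criteria.max_latency_ratio:
        return "rollback"
    return "promote"


# Hypothetical readings: error rate rose from 1.0% to 4.5% on 1,200 requests.
print(canary_decision(0.010, 0.045, 800, 850, 1200))
```

Keeping the thresholds in a single immutable object means they can be reviewed and signed off as part of the deployment gate, instead of being decided during an incident.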

Common Failure Points

We see the same mistakes repeatedly. They are expensive not only because they waste cash, but also because they breed scepticism that kills momentum for future programmes.

Below are the failure points we would tackle first when advising a client.

- Training without application: courses completed but no live projects — learning fades and confidence evaporates.

- One-off external hires used as a crutch: specialists deliver code but not capability; knowledge leaves when contracts end.

- Ignoring governance: models shipped without basic monitoring, exposing the firm to compliance and reputational risk.

- Using generic content: curricula that ignore your domain yield low transferability and delayed ROI.

- Underestimating change management: failing to align incentives, review cycles and promotion criteria to new skills.

Comparison Table: DIY vs Outsource

Deciding whether to build talent internally or buy expertise externally is not a binary choice, but each option carries predictable trade-offs. We compare the two approaches across five dimensions that matter to senior decision-makers.

Use this table to guide capital allocation and timeline assumptions for your talent strategy.

| Dimension | DIY (Internal Development) | Outsource (Consultant / Vendor) |
|---|---|---|
| Cost (12 months) | Moderate upfront; lower marginal long-term cost | High ongoing professional fees |
| Speed to Market | Slower initially; accelerates after 3–6 months | Fast immediate delivery; dependent on vendor availability |
| Knowledge Retention | High: skills remain inside the organisation | Low: knowledge often remains with the vendor |
| Control & Security | Greater control over IP and data handling | Less control; requires strict contractual terms |
| Long-term ROI | Higher, as teams compound skills and reuse learnings | Lower unless paired with an internal translation plan |

Visualised Workflow Roadmap

We favour a concise, visual roadmap to align stakeholders and set expectations. The element below is designed to be embedded in a roadmap page or an internal sprint plan.

Each stage corresponds to the sequence described earlier and should map to a 12–24 month plan with owners and KPIs attached.

- Audit & Prioritise (weeks 0–4)

- Design & Materials (weeks 2–6)

- Hands-on & Mentoring (weeks 6–12)

- Pilot Projects (months 3–6)

- Rollout & Governance (months 6–24)

Verification & Success Metrics

Measurement is the non-negotiable discipline that separates education theatre from business transformation. We insist on five metrics that are simple to track and directly tied to commercial outcomes.

Below is a comparison-bar visual showing expected relative impact across those metrics for a properly run internal literacy programme over 12 months.

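[Figure: comparison bars of expected relative impact for the five metrics. Values shown: 72%, 58%, 46%, 64%, 38%; metric labels are not included.]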

The Long-Term Maintenance Plan

Learning does not stop after the first wave of bootcamps. To protect your initial investment, turn the programme's mechanisms into sustained practice and budget for continuous refresh cycles aligned to business cadence.

Key elements of a maintenance plan include scheduled curriculum updates tied to model changes, internal certification levels for promotions, a rotational mentorship programme, and quarterly post-mortems that feed back into curriculum tweaks. Treat this as product development: roadmap features, prioritise by impact, and measure adoption.

- Certification: define levels and embed them into HR progression criteria.

- Content ops: a small team to refresh exercises and curate new case studies every quarter.

- Mentor rotation: internal mentors rotate across teams to prevent knowledge silos.

- Governance reviews: model performance and compliance checks at regular intervals.

- Budget line: recurring allocation for compute, data labelling and classroom time — not discretionary spend.

FAQ

- How quickly will an internal programme show business results? Expect measurable outcomes from pilot projects in 3–6 months; organisation-wide ROI typically materialises within 12 months if projects are chosen for clear commercial impact.

- Can we combine outsourcing with internal development? Yes. Use vendors for rapid delivery while concurrently building internal mentors to absorb and extend vendor work; ensure knowledge-transfer clauses are contractually enforced.

- What is the minimum team size to start a literacy programme? You can begin with a small cross-functional cohort (6–12 people) focused on two high-impact pilots; scaling is modular once proof points exist.