The EU’s Statutory Licensing Tax: Why Your AI Model Costs Are About to Spike

The Strategic Objective

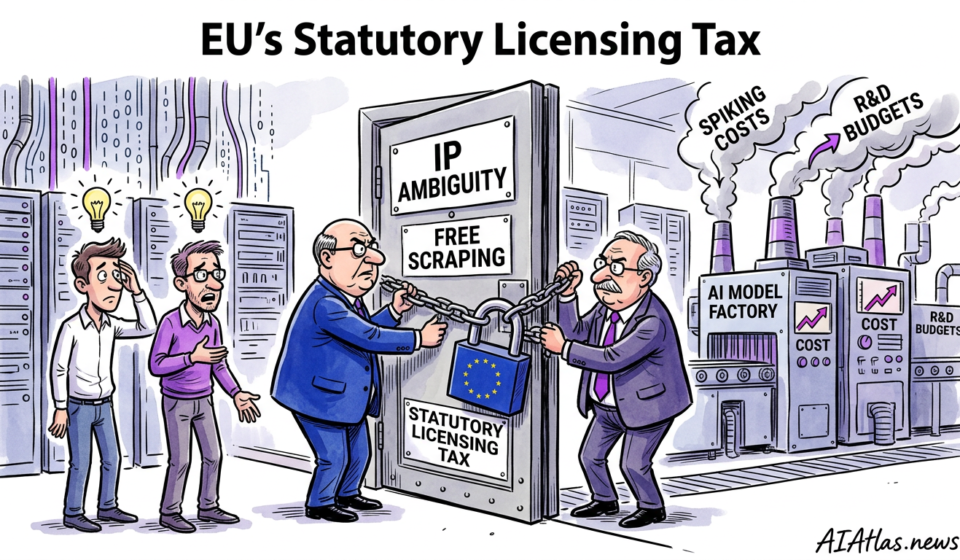

European Union lawmakers are currently closing the door on an era of intellectual property ambiguity. For years, founders have scraped the open web, operating under the highly tenuous assumption that training on that data counts as fair use, a United States doctrine with no direct counterpart in EU copyright law. That assumption is now structurally dead. The impending regulatory framework mandating payment to news publishers and rights holders for training corpuses represents a profound commercial threat. We view this not as a mere legal footnote or an abstract regulatory hurdle, but as a direct assault on the fundamental capital efficiency of model development.

In our experience across AI Atlas News, the immediate consequence of this mandate is the sudden introduction of standardised, legally enforced licensing costs. For venture capitalists evaluating burn rates, a new and volatile operational expenditure has materialised overnight. If you are training foundation models or fine-tuning enterprise architectures on proprietary texts without a clearance mechanism, your cost structure is vulnerable to catastrophic retroactive penalties. Our objective here is to distil this complex regulatory workflow into actionable operations, ensuring your enterprise avoids the cash-incinerating pitfalls that invariably accompany sudden legal compliance shifts.

Prerequisite Checklist

Before executing a defensive pivot in your data operations, your technical and legal teams must establish a rigorous baseline of operational reality. Rushing to alter model architectures without comprehensively auditing your existing liabilities is an exceedingly expensive mistake. What we are seeing in the broader market is that enterprise procurement leaders now demand granular proof of provenance; if you cannot definitively prove your data is clean, you simply cannot sell your software to corporate clients.

Consequently, establishing a robust operational foundation is entirely non-negotiable. You must assess both your current technical capabilities and your financial reserves allocated for legal contingencies. Founders who fail to prepare these prerequisites inevitably burn through their runway negotiating terms from a position of profound weakness, ultimately compromising their equity.

- Comprehensive data lineage tracking infrastructure, capable of isolating specific publisher inputs within existing training weights.

- Baseline operational expenditure models detailing current compute costs versus projected legal licensing fees per gigabyte of high-fidelity text.

- Retained intellectual property counsel with specific, documented expertise in European Union copyright directives and algorithmic disgorgement.

- Version control systems configured to rapidly roll back live models to pre-infringement states if a specific dataset is legally contested.

- Explicit clearance from your venture capital backers regarding the acceptable margin compression resulting from increased data acquisition costs.
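
As a rough illustration of the baseline expenditure model in the checklist above, the sketch below compares compute spend with projected licensing fees per gigabyte of text. Every name (`TrainingBudget`, `licence_fee_per_gb`) and every figure is a hypothetical placeholder, not a market rate or a figure from any regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingBudget:
    """Inputs for one training run. All values are illustrative placeholders."""
    compute_cost: float        # current compute spend for the run
    licensed_text_gb: float    # gigabytes of licensed text to be ingested
    licence_fee_per_gb: float  # projected or negotiated fee per gigabyte


def licensing_cost(budget: TrainingBudget) -> float:
    """Projected licensing fees for the run."""
    return budget.licensed_text_gb * budget.licence_fee_per_gb


def projected_total(budget: TrainingBudget) -> float:
    """Compute spend plus projected licensing fees."""
    return budget.compute_cost + licensing_cost(budget)


def licensing_share(budget: TrainingBudget) -> float:
    """Fraction of the projected total that goes to licensing fees."""
    total = projected_total(budget)
    if total == 0:
        return 0.0
    return licensing_cost(budget) / total


if __name__ == "__main__":
    # Hypothetical run: the numbers exist only to exercise the functions.
    run = TrainingBudget(
        compute_cost=100_000.0,
        licensed_text_gb=50.0,
        licence_fee_per_gb=500.0,
    )
    print(f"projected total: {projected_total(run):,.0f}")
    print(f"licensing share: {licensing_share(run):.0%}")
```

Tracking the licensing share alongside the total gives the board a single figure for the margin compression mentioned in the final checklist item.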

Sequence of Operations

Transitioning your engineering infrastructure from a legally ambiguous state to a fully compliant, commercially defensible posture requires surgical precision. We have observed that haphazard compliance efforts often break functional models, introduce catastrophic bias, or stall deployment cycles indefinitely. The following sequence is engineered to preserve your technical momentum whilst systematically neutralising regulatory risk across your primary business units.

This operational sequence requires extremely tight coordination between your machine learning engineers, external legal advisors, and chief financial officers. Treat each phase as a strict gating mechanism; do not proceed to synthetic generation or architectural adjustments until the prior corpus audit is forensically complete and officially signed off by your legal counsel.

Comprehensive Corpus Audit

The initial operation demands a ruthless, forensic examination of every dataset ingested over the lifecycle of your models. You must categorise your training data into distinct risk tiers: public domain, permissive open-source, and proprietary publisher content. Engineers frequently resist this phase because it requires unearthing undocumented scripts and legacy data pipelines. However, failure to isolate the precise volume of copyrighted material you currently possess renders all subsequent financial projections entirely fictional.
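
A minimal sketch of the tiering step, assuming each dataset already has a manifest entry with a licence label. The record fields, the label sets, and the extra `UNKNOWN` tier for undocumented data are our own assumptions for illustration; the real mapping from licence to tier is a decision for your legal counsel.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Iterable


class RiskTier(Enum):
    PUBLIC_DOMAIN = "public_domain"
    PERMISSIVE_OPEN_SOURCE = "permissive_open_source"
    PROPRIETARY_PUBLISHER = "proprietary_publisher"
    UNKNOWN = "unknown"  # assumption: undocumented data gets its own bucket


@dataclass(frozen=True)
class DatasetRecord:
    name: str
    licence: str    # licence label from the manifest (hypothetical field)
    size_gb: float


# Hypothetical label sets; replace with the labels your manifests actually use.
PUBLIC_DOMAIN_LABELS = {"public-domain", "cc0"}
PERMISSIVE_LABELS = {"mit", "apache-2.0", "cc-by-4.0"}
PROPRIETARY_LABELS = {"all-rights-reserved", "publisher"}


def classify(record: DatasetRecord) -> RiskTier:
    """Map a manifest licence label to a risk tier."""
    label = record.licence.strip().lower()
    if label in PUBLIC_DOMAIN_LABELS:
        return RiskTier.PUBLIC_DOMAIN
    if label in PERMISSIVE_LABELS:
        return RiskTier.PERMISSIVE_OPEN_SOURCE
    if label in PROPRIETARY_LABELS:
        return RiskTier.PROPRIETARY_PUBLISHER
    return RiskTier.UNKNOWN


def volume_by_tier(records: Iterable[DatasetRecord]) -> Dict[RiskTier, float]:
    """Total gigabytes held in each risk tier."""
    totals: Dict[RiskTier, float] = defaultdict(float)
    for record in records:
        totals[classify(record)] += record.size_gb
    return dict(totals)


if __name__ == "__main__":
    manifest = [
        DatasetRecord("old_books", "CC0", 12.0),
        DatasetRecord("code_snippets", "MIT", 3.5),
        DatasetRecord("news_archive", "publisher", 40.0),
        DatasetRecord("legacy_scrape", "", 8.0),  # no licence recorded
    ]
    for tier, gigabytes in volume_by_tier(manifest).items():
        print(f"{tier.value}: {gigabytes} GB")
```

Anything that lands in the unknown bucket is exactly the undocumented legacy material the audit exists to surface.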

Risk Segmentation and Truncation

Once the audit illuminates your legal exposure, you must aggressively excise high-risk datasets from your active training pipelines. This involves systematically deleting unlicensed news corpuses and publisher archives from your operational servers. We strongly advise implementing hard truncation protocols that explicitly block developers from pulling external text sources without documented legal approval. This quarantine prevents the ongoing contamination of your models and establishes a defensible perimeter.
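
One way to picture such a hard truncation protocol is a gate that refuses any external source lacking a recorded approval. The sketch below is illustrative only: `IngestionGate`, `Approval`, and the example source identifiers are hypothetical names, and a production version would read approvals from whatever system your legal team actually signs off in.

```python
from dataclasses import dataclass
from typing import Dict


class UnapprovedSourceError(Exception):
    """Raised when a pipeline requests a source with no recorded legal approval."""


@dataclass(frozen=True)
class Approval:
    source: str
    approved_by: str
    reference: str  # ticket or memo identifier (hypothetical field)


class IngestionGate:
    """Default-deny gate: a source is usable only if an approval is on record."""

    def __init__(self, approvals: Dict[str, Approval]) -> None:
        self._approvals = dict(approvals)

    def check(self, source: str) -> Approval:
        approval = self._approvals.get(source)
        if approval is None:
            raise UnapprovedSourceError(
                f"no documented legal approval for source {source!r}"
            )
        return approval


if __name__ == "__main__":
    gate = IngestionGate(
        {
            "internal-support-logs": Approval(
                source="internal-support-logs",
                approved_by="legal",
                reference="MEMO-001",
            )
        }
    )
    print(gate.check("internal-support-logs").reference)
    try:
        gate.check("scraped-news-archive")
    except UnapprovedSourceError as error:
        print(f"blocked: {error}")
```

The design choice that matters is the default: a missing record blocks the pull, so forgetting to register a source fails safe rather than silently ingesting it.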

Synthetic Data Substitution

To offset the performance degradation caused by excising high-quality human literature and journalism, you must immediately pivot to synthetic data generation. Deploy your clean, highly vetted models to generate task-specific training sets internally. Whilst synthetic pipelines require initial compute capital, they represent a fixed operational cost compared to the recurring, infinite rent-seeking of publisher licensing fees. We consistently see top-tier startups surviving legal shifts by mastering this synthetic substitution.

Commercial Licensing Negotiation

For the critical data domains where synthetic generation fails to capture necessary real-world nuance, such as real-time market analysis or complex editorial reasoning, you must secure direct commercial licences. Approach publishers not as a distressed buyer, but as a strategic partner offering structured payment tiers. Establish strict data access protocols that ensure you pay only for the exact tokens ingested and that stop rogue engineering units from incurring unauthorised expenditure.
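
To make the idea of paying only for the exact tokens ingested concrete, here is a small metering sketch with a hard spending cap. The class name, the per-million-token pricing unit, and the numbers are assumptions chosen for illustration; actual licence terms may be structured quite differently.

```python
class BudgetExceededError(Exception):
    """Raised when an ingestion would push spend past the approved budget."""


class TokenMeter:
    """Tracks licensed tokens ingested and refuses ingestion beyond a budget."""

    def __init__(self, price_per_million_tokens: float, budget: float) -> None:
        if price_per_million_tokens < 0 or budget < 0:
            raise ValueError("price and budget must be non-negative")
        self.price_per_million_tokens = price_per_million_tokens
        self.budget = budget
        self.tokens_ingested = 0

    def _cost_of(self, tokens: int) -> float:
        return tokens / 1_000_000 * self.price_per_million_tokens

    def cost(self) -> float:
        """Spend so far, based only on tokens actually ingested."""
        return self._cost_of(self.tokens_ingested)

    def record(self, token_count: int) -> None:
        """Register an ingestion, or raise if it would exceed the budget."""
        if token_count < 0:
            raise ValueError("token_count must be non-negative")
        projected = self._cost_of(self.tokens_ingested + token_count)
        if projected > self.budget:
            raise BudgetExceededError(
                f"ingestion would cost {projected:.2f}, budget is {self.budget:.2f}"
            )
        self.tokens_ingested += token_count


if __name__ == "__main__":
    meter = TokenMeter(price_per_million_tokens=2.0, budget=10.0)
    meter.record(4_000_000)
    print(meter.cost())
    try:
        meter.record(2_000_000)  # would take spend past the cap
    except BudgetExceededError as error:
        print(f"refused: {error}")
```

Because a refused ingestion leaves the counter untouched, the meter's total always matches what was actually consumed, which is the figure you would reconcile against a publisher invoice.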

Continuous Compliance Monitoring

The final operational step is deploying automated compliance sentinels within your continuous integration and deployment pipelines. These software tools must scan all incoming training sets for copyrighted signatures or known publisher watermarks before ingestion. Treating compliance as a one-off project rather than an automated pipeline feature is a guaranteed route to future legal liabilities. You must build monitoring directly into the core engineering workflow.
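
As a simplified picture of such a sentinel, the sketch below flags incoming documents that either match a blocklist of content fingerprints or contain a known watermark phrase. It is an assumption-laden illustration: the function names and the marker string are hypothetical, and exact-match hashing only catches verbatim copies after whitespace and case normalisation, so a real deployment would need fuzzier matching.

```python
import hashlib
from typing import Iterable, List, Set


def fingerprint(text: str) -> str:
    """SHA-256 of the text after lower-casing and collapsing whitespace."""
    normalised = " ".join(text.lower().split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def scan(
    documents: Iterable[str],
    blocked_fingerprints: Set[str],
    watermark_markers: Iterable[str],
) -> List[int]:
    """Return the indices of documents that must not be ingested."""
    markers = [marker.lower() for marker in watermark_markers]
    flagged: List[int] = []
    for index, document in enumerate(documents):
        lowered = document.lower()
        is_known_copy = fingerprint(document) in blocked_fingerprints
        has_watermark = any(marker in lowered for marker in markers)
        if is_known_copy or has_watermark:
            flagged.append(index)
    return flagged


if __name__ == "__main__":
    # Hypothetical blocklist built from text a publisher has asked you to exclude.
    blocked = {fingerprint("Exclusive  report TEXT")}
    incoming = [
        "exclusive report text",
        "harmless internal note",
        "Story body (c) Example Publisher",
    ]
    flagged = scan(incoming, blocked, ["(c) example publisher"])
    print(flagged)
    # In a CI job, a non-empty result would fail the build.
    raise SystemExit(1 if flagged else 0)
```

Wiring the exit code into the pipeline is what turns the check from a report into a gate: a flagged batch stops the build instead of producing a warning nobody reads.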

Common Failure Points

The landscape of technological adaptation is heavily littered with ventures that correctly identified a threat but executed their strategic pivot disastrously. In our experience, founders routinely underestimate the sheer technical debt embedded in their legacy data pipelines. When forced to untangle unlicensed publisher data from their models, they discover that their engineers never built proper deletion mechanisms, leading to the devastating requirement of retraining entirely from scratch.

Another prevalent, cash-burning failure is the premature capitulation to aggressive licensing demands from massive media conglomerates. Enterprise technology leaders often panic upon receiving a cease-and-desist or observing the latest European Union directive, subsequently signing multi-year data contracts at exorbitant rates. This failure to explore alternative architectural optimisations or synthetic data equivalents destroys capital efficiency, permanently impairs gross margins, and swiftly renders the company entirely uninvestable.

Comparison Table: DIY vs Outsource

When confronted with the absolute necessity of acquiring legally cleared training material, executive teams face a stark strategic choice: build an internal governance apparatus to negotiate direct licences and curate data, or entirely outsource procurement to third-party data aggregators who absorb the legal liabilities. Both avenues carry distinct commercial trade-offs that profoundly impact operational cash burn.

We synthesise the critical differences between these two methodologies below. As an investor or operator, your selection must directly align with your core competencies; if your distinct edge is purely algorithmic, internalising a sprawling legal procurement division is a catastrophic misallocation of your finite venture capital.

| Strategic Dimension | Internal Governance (DIY) | Outsourced Compliant Procurement |
|---|---|---|
| Cost Structure | High upfront legal and negotiation OPEX; lower long-term per-token fees. | Low initial OPEX; premium recurring subscription and usage margins. |
| Legal Liability | Retained internally; highly vulnerable to shifting case law and direct lawsuits. | Transferred to the external vendor via strict contractual indemnification clauses. |
| Speed to Market | Exceedingly slow; requires months of bilateral contract negotiations. | Highly rapid; immediate API access to pre-cleared, sanitised training corpuses. |
| Data Uniqueness | High; enables exclusive access to proprietary datasets unavailable to rivals. | Low; your competitors can purchase identical training blocks from the same vendor. |
| Engineering Distraction | Severe; forces core machine learning talent to manage ingestion compliance. | Minimal; allows core technical teams to focus purely on algorithmic architecture. |

Visualised Workflow Roadmap

To successfully navigate this new regulatory reality without destroying your balance sheet, you must rigorously categorise your data acquisition strategies based on their inherent risk and financial burden. The introduction of standardised licensing costs effectively punishes lazy engineering. By plotting your methodologies, you can clearly identify where to allocate resources and which practices must be immediately terminated.

The matrix below illustrates the trade-offs between implementation cost and legal risk exposure. As we have repeatedly advised our corporate partners, your definitive objective is to migrate all operations toward the bottom-right quadrant—accepting higher upfront implementation costs to structurally eliminate existential legal threats. Operating in the top-left quadrant is simply no longer a viable business model under European law.

| | Low Implementation Cost | High Implementation Cost |
|---|---|---|
| High Legal Risk | Unfiltered Web Scraping | Ignorant Aggregation |
| Low Legal Risk | Synthetic Substitution | Direct Licensing Deals |

Verification and Success Metrics

Implementation without rigorous, unsentimental measurement is merely expensive guesswork. To ascertain whether your transition away from unlicensed corpuses is genuinely succeeding, you must establish stringent commercial metrics that report directly to the board of directors. We strongly caution against relying on vanity metrics, such as the sheer volume of legally cleared data acquired, which do not accurately reflect capital efficiency or algorithmic improvement.

Instead, focus your tracking heavily on the “cost per clean token” measured directly against the resulting model accuracy. If your expenditure on publisher licences increases by forty per cent but your model performance only marginally improves, your procurement strategy is fundamentally failing. Furthermore, you must continuously measure the “legal clearance latency”, meaning the time required to approve and ingest a new dataset. A high latency indicates procedural friction that will eventually cripple your product iteration speed, allowing leaner competitors to outmanoeuvre you.
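
Both metrics reduce to simple arithmetic, sketched below. The function names, the choice of days as the latency unit, and all sample figures are our assumptions for illustration; the example mirrors the scenario above, in which spend rises by forty per cent while accuracy barely moves.

```python
from datetime import datetime
from statistics import mean
from typing import Iterable, Tuple


def cost_per_clean_token(licence_spend: float, clean_tokens: int) -> float:
    """Licensing spend divided by the number of legally cleared tokens."""
    if clean_tokens <= 0:
        raise ValueError("clean_tokens must be positive")
    return licence_spend / clean_tokens


def relative_change(before: float, after: float) -> float:
    """Fractional change from before to after (0.4 means +40%)."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


def mean_clearance_latency_days(
    requests: Iterable[Tuple[datetime, datetime]],
) -> float:
    """Average days between a dataset request and its legal approval."""
    latencies = [
        (approved - requested).total_seconds() / 86_400
        for requested, approved in requests
    ]
    return mean(latencies)  # raises StatisticsError if there are no requests


if __name__ == "__main__":
    # Hypothetical quarter-on-quarter figures.
    spend_change = relative_change(before=100_000.0, after=140_000.0)
    accuracy_change = relative_change(before=0.80, after=0.81)
    print(f"spend: {spend_change:+.1%}, accuracy: {accuracy_change:+.2%}")
    print(f"cost per clean token: {cost_per_clean_token(140_000.0, 2_000_000_000):.6f}")

    clearances = [
        (datetime(2025, 1, 1), datetime(2025, 1, 11)),
        (datetime(2025, 1, 5), datetime(2025, 1, 9)),
    ]
    print(f"mean clearance latency: {mean_clearance_latency_days(clearances):.1f} days")
```

Reporting the spend change and the accuracy change side by side makes a failing procurement strategy visible at a glance, without requiring the board to interpret raw token counts.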

The Long-Term Maintenance Plan

The European Union framework regarding artificial intelligence and copyright is definitively not a static endpoint; rather, it is the volatile beginning of a prolonged era of judicial interpretation and regulatory refinement. Securing basic compliance today absolutely does not guarantee institutional immunity tomorrow. You must treat data governance as a permanent operational pillar rather than a temporary legal hurdle, budgeting for continuous legal updates and maintaining a flexible architecture capable of purging entire data domains upon remarkably short notice.

We firmly believe that the most commercially successful ventures over the next thirty-six months will be those that aggressively institutionalise their compliance infrastructure. This involves scheduling quarterly audits of all external data dependencies and refreshing your synthetic data pipelines to reflect shifting market demands. By embedding these defensive mechanisms directly into your core engineering culture, you transform a crippling regulatory burden into a formidable barrier to entry that your undercapitalised rivals simply cannot replicate.

Frequently Asked Questions

As this unprecedented regulatory shift fundamentally alters the economic viability of machine learning development, we continuously field urgent inquiries from concerned enterprise leaders and investors. The sheer volume of speculative misinformation circulating in the market requires sharp, definitive clarity. You cannot allow abstract legal ambiguity to paralyse your active engineering efforts while your competitors adapt.

Below, we systematically address the most pressing queries regarding the practical implementation of the new data compliance framework. We provide the exact, unvarnished perspectives we share confidentially with the founders and venture capitalists who are actively navigating this turbulent commercial transition.

- How does the EU framework practically impact open-source models trained on contested data?

  Even if your model weights are freely distributed, commercial entities using those weights could be subjected to severe licensing liabilities if the original training data contained unpaid publisher content. Enterprise procurement teams will inevitably demand strict indemnification, forcing creators to either provide absolute proof of legal clearance or face widespread commercial rejection from serious buyers.

- Can we rely entirely on synthetic data to completely bypass the need for publisher licences?

  Whilst synthetic generation drastically reduces volume requirements and limits operational expenditure, relying entirely upon it can lead to model collapse and a severe degradation in output diversity. You will still require a statistically significant injection of human-authored, high-fidelity data (which must be legally licensed) to ground your models and prevent compounding algorithmic hallucinations.

- Should early-stage startups focus on compliance immediately, or build first and settle legalities later?

  The era of moving fast and ignoring intellectual property is definitively over, as venture capitalists now aggressively penalise unquantifiable legal risks during standard due diligence. You must integrate fundamental data tracking from day one; retroactively untangling contaminated datasets often costs far more capital than the startup is currently worth, ultimately resulting in forced liquidations.