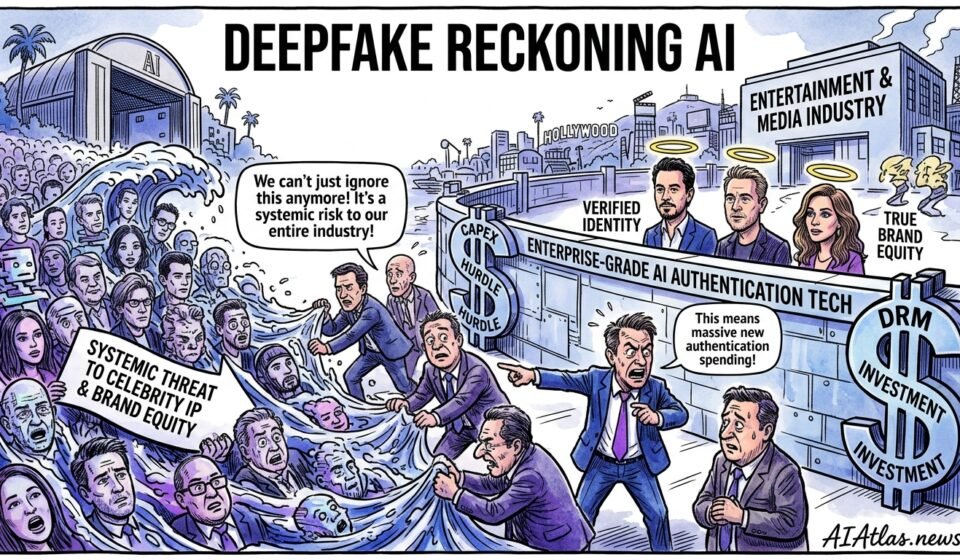

The Deepfake Reckoning: Why AI Verification Is Entertainment’s Newest Capex Hurdle

The Contrarian Thesis

We have watched the viral, debunked image of Tyler Perry and Marlo Hampton circulate and land like an economic grenade. Most commentary treated it as another internet misfire—an amusing footnote for PR teams to clean up. We disagree. This episode is symptomatic of a structural problem: synthetic narrative assets are no longer trivial noise. They actively erode the commercial exclusivity of high-value talent and, by extension, the monetisable scarcity that studios, brands and talent agencies sell.

When an image, audio clip or “leaked” statement can be generated and distributed in minutes, the traditional levers of reputation management and legal redress become reactive, expensive and often ineffective. For senior executives and investors, that ought to translate into a binary calculus: either you treat identity fraud as a perimeter security problem and invest accordingly, or you accept recurring value leakage from IP, endorsement fees and licensing arrangements.

Flaws in Current Market Assumptions

We see three persistent misreadings in the market. First, many assume media verification is purely a PR cost: hire a crisis firm, issue denials, move on. That view underestimates cumulative brand depreciation. Second, the dominant commercial belief that consumer-facing verification tools are sufficient ignores enterprise risk: a viral asset that harms a celebrity’s credibility can damage multi-million-pound deals before a fact-checker flags it.

Third, investors and buyers often assume that legal backstops (copyright claims, takedowns and DMCA notices) are effective deterrents. In practice, takedowns are slow, jurisdictionally constrained and easily circumvented. The net effect is a market that consistently undervalues deterministic authentication, over-indexes on remediation, and under-invests in prevention at scale.

The Structural Shift

We are observing a structural shift from intermittent PR shocks to continual identity erosion. Synthetic assets now function as attack vectors in commercial negotiations: they are used to pressure agencies, distort contract talks, or hijack endorsement windows. The marginal cost of producing convincing fakes has collapsed, while distribution velocity has increased thanks to short-form platforms and closed messaging apps.

That changes the business model of celebrity IP. Where scarcity once came from controlled appearances and gated releases, scarcity is now an enforceable attribute that enterprises must protect proactively. The practical implication is a movement from reactive cost lines (PR clean-up, legal fees) to predictable, preventive spending on verification infrastructure and DRM integration.

Decision Framework for Capital Allocation

We recommend using a three-tier capital allocation framework: Protect, Verify, and Monetise. Protect funding should be directed at access control, watermarking at source, and contractual clauses that mandate provenance metadata. Verify investments buy enterprise-grade authentication systems that operate in real time. Monetise capital targets technology that converts provenance into revenue—licensed authenticity stamps, premium verified content feeds, and attribution APIs tied to royalty flows.

The allocation mix should depend on exposure. Studios and streaming platforms, which sell exclusivity, should weight the budget roughly 60% Protect, 30% Verify and 10% Monetise. Talent managers and smaller agencies should consider a 40/40/20 split across the same three tiers: less infrastructure-heavy Protect spend, but more emphasis on verification partnerships and monetisation pathways that compensate for unavoidable leakage.
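As a rough illustration of the arithmetic, the two splits above can be applied to a budget as follows. This is a minimal sketch: the profile names, the `allocate` function and the £1,000,000 budget are hypothetical and chosen only for the example.

```python
# Percentage splits per tier, taken from the text above.
# The profile keys are hypothetical labels used only in this sketch.
SPLITS = {
    "studio_or_streamer": {"Protect": 60, "Verify": 30, "Monetise": 10},
    "talent_manager_or_agency": {"Protect": 40, "Verify": 40, "Monetise": 20},
}


def allocate(budget_gbp: int, profile: str) -> dict[str, int]:
    """Split a whole-pound budget across the three tiers for a given profile."""
    split = SPLITS[profile]
    if sum(split.values()) != 100:
        raise ValueError("tier percentages must sum to 100")
    # Integer division keeps whole pounds; any remainder is left unallocated.
    return {tier: budget_gbp * pct // 100 for tier, pct in split.items()}


if __name__ == "__main__":
    # Hypothetical budget of £1,000,000 for a studio.
    print(allocate(1_000_000, "studio_or_streamer"))
    # {'Protect': 600000, 'Verify': 300000, 'Monetise': 100000}
```

The same budget under the agency profile would shift £200,000 out of Protect, half of it to Verify and half to Monetise.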

Risk Assessment Table

Below we outline the most economically salient attack vectors, the operational impact and a high-level mitigation posture. This is not exhaustive but captures recurring patterns we see across deals and disputes.

| Threat Vector | Likelihood (1–5) | Impact on Brand | Primary Mitigation | Estimated Annual Cost (£) |
|---|---|---|---|---|
| Deepfake video | 5 | High (contractual and reputational) | Real-time provenance verification + forensic watermarking | 250,000–1,000,000 |
| Fabricated image (viral memes) | 5 | Medium (social credibility erosion) | Cryptographic signing at asset origin | 50,000–300,000 |
| Synthetic audio (fake endorsements) | 4 | High (commercial contracts at risk) | Audio fingerprinting + contractual verification windows | 150,000–600,000 |
| Cloned social accounts | 4 | Medium (direct-to-fan scams) | Platform partnerships and verified identity APIs | 30,000–200,000 |
| Doctored press releases | 3 | Low–Medium (market confusion) | Authenticated distribution channels + signed PDFs | 20,000–100,000 |

These figures are illustrative: the point is not a precise budget but the relative economics. Prevention buys predictability; remediation buys uncertainty.

Visualised Impact Matrix

To help prioritise, we map likelihood against economic impact. Enterprises should prioritise actions that fall in the high-likelihood, high-impact quadrant.

- Deepfake video: impact Very High, likelihood Very High
- Synthetic audio: impact High, likelihood High
- Doctored press release: impact Low, likelihood Low
- Cloned accounts: impact Medium, likelihood High
- Fabricated images: impact Medium, likelihood Very High

The visual clarifies trade-offs. Not every high-likelihood asset demands equal spend; prioritisation should follow contractual exposure and velocity of spread.
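One way to turn the matrix into an ordered priority list is to map the qualitative labels onto a numeric scale and multiply impact by likelihood. The sketch below does that under stated assumptions: the 1 to 4 ordinal scale, the product score and the function names are illustrative choices of ours, not a standard scoring method.

```python
# Ordinal values for the qualitative labels (an assumption for illustration).
SCALE = {"Low": 1, "Medium": 2, "High": 3, "Very High": 4}

# (impact, likelihood) labels as listed in the matrix above.
MATRIX = {
    "Deepfake video": ("Very High", "Very High"),
    "Synthetic audio": ("High", "High"),
    "Doctored press release": ("Low", "Low"),
    "Cloned accounts": ("Medium", "High"),
    "Fabricated images": ("Medium", "Very High"),
}


def priority_score(impact: str, likelihood: str) -> int:
    """Simple product score: higher means more urgent."""
    return SCALE[impact] * SCALE[likelihood]


def rank_threats(matrix: dict[str, tuple[str, str]]) -> list[str]:
    """Threat names ordered from highest to lowest priority score."""
    return sorted(matrix, key=lambda name: priority_score(*matrix[name]), reverse=True)


def top_quadrant(matrix: dict[str, tuple[str, str]], threshold: str = "High") -> list[str]:
    """Threats whose impact and likelihood are both at or above the threshold."""
    cut = SCALE[threshold]
    return [
        name
        for name, (impact, likelihood) in matrix.items()
        if SCALE[impact] >= cut and SCALE[likelihood] >= cut
    ]


if __name__ == "__main__":
    print(rank_threats(MATRIX))
    print(top_quadrant(MATRIX))  # ['Deepfake video', 'Synthetic audio']
```

A product score is deliberately crude. In practice the ranking would also be weighted by contractual exposure and velocity of spread, as noted above.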

Strategic Recommendations for Leaders

We advise executives to institutionalise authenticity the same way they do IP contracts: treat it as a core asset class with governance, budgets and measurable KPIs. Immediate actions: mandate signed provenance metadata for all distributed content, negotiate platform-level verification agreements for priority talent, and integrate forensic detection into ingest pipelines for anything that might be monetised.

For investors, the opportunity is twofold. First, back companies that provide deterministic provenance at scale—cryptographic signing, tamper-evident chains and federated attestation. Second, invest in business models that monetise verified content: premium feeds for advertisers, authenticated archives for studios, and subscription verification services for talent management firms. The returns will come from converting risk avoidance into a predictable, recurring revenue stream.
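To make "signed provenance metadata" concrete, the sketch below binds a content hash and some metadata into a signed record, then checks it later. It is a simplified stand-in, not a production design. It uses a shared-secret HMAC from the Python standard library so that it runs without dependencies, whereas a real deployment would more plausibly use public-key signatures and an industry provenance standard such as C2PA. The function names, metadata fields and key are hypothetical.

```python
import hashlib
import hmac
import json


def _canonical(record: dict) -> bytes:
    # Stable JSON encoding so signer and verifier hash identical bytes.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_asset(asset_bytes: bytes, metadata: dict, key: bytes) -> dict:
    """Return a manifest binding the metadata to the asset's SHA-256 digest."""
    record = dict(metadata, sha256=hashlib.sha256(asset_bytes).hexdigest())
    signature = hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()
    return {"record": record, "signature": signature}


def verify_asset(asset_bytes: bytes, manifest: dict, key: bytes) -> bool:
    """True only if both the asset bytes and the signed record are unmodified."""
    record = manifest["record"]
    if record.get("sha256") != hashlib.sha256(asset_bytes).hexdigest():
        return False
    expected = hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, manifest["signature"])


if __name__ == "__main__":
    key = b"demo-only-secret"          # hypothetical key; never hard-code in practice
    image = b"...original image bytes..."  # placeholder content for the example
    manifest = sign_asset(image, {"creator": "Example Studio", "asset_id": "A-001"}, key)
    print(verify_asset(image, manifest, key))             # True
    print(verify_asset(image + b"edit", manifest, key))   # False
```

The design point is that verification fails on any change to either the asset or its metadata, which is what lets a distributor reject unsigned or altered material at ingest rather than after it spreads.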

Future-Proofing the Business Model

We expect three developments over the next 24 months: standardised provenance metadata across major platforms, tighter contractual obligations around source signing, and higher premiums for authenticated content. Leaders should prepare to buy into standards or risk being priced out of distribution windows where authenticity becomes a differentiator.

Implementing these changes requires a mix of engineering, legal and commercial effort. That means hiring product owners who understand cryptography and media workflows, pushing for contractual clauses that require provenance, and reallocating a slice of marketing budgets to authenticity insurance. The alternative is incremental erosion of brand equity that compounds over deals.

FAQ

- What immediate steps should a talent manager take after a synthetic asset goes viral? Issue a clear provenance statement, engage forensic verification teams, and partner with platform trust-and-safety contacts to limit distribution while documenting the incident for legal action.

- Can current DRM systems stop synthetic identity fraud? Traditional DRM addresses copying and distribution but not falsified identity. You need provenance, cryptographic signing and real-time verification layered on top of DRM to address identity-specific threats.

- Where should investors look for defensible startups? Look for firms combining cryptographic provenance, low-latency verification APIs and deep platform integrations. Network effects (platform partnerships and enterprise contracts) will create durable moats.