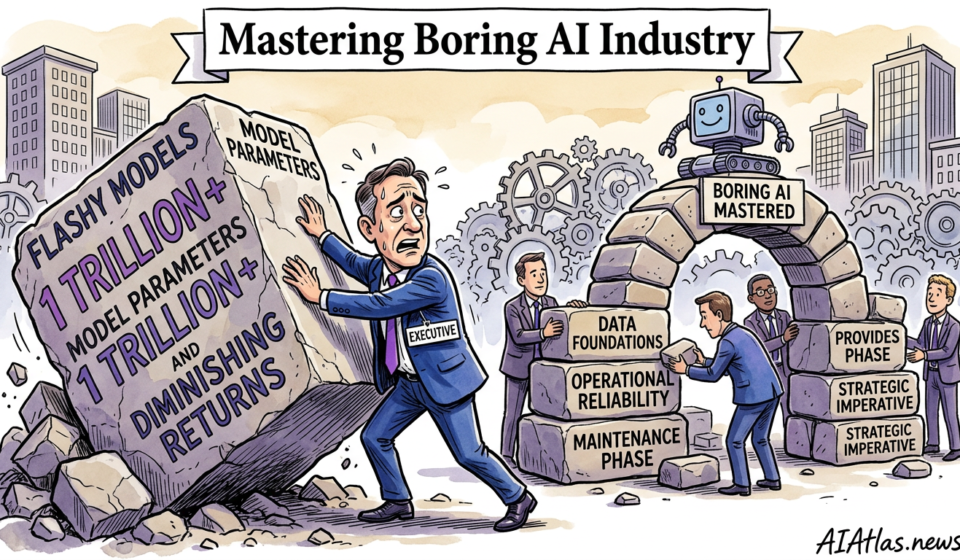

Mastering ‘Boring AI’: Why Industry Leaders are Pivoting to Data Foundations Over Flashy Models

The Strategic Imperative

The industry’s fixation on raw model parameters has reached a point of severe diminishing returns. At AI Atlas News, we are continually pitched by startup founders and enterprise CTOs who firmly believe their competitive moat lies in adopting the largest, most parameter-heavy multimodal architectures available. In our experience, this is a profound miscalculation that leads directly to capital incineration. The commercial reality we observe in mid-to-large enterprise deployments is starkly different: underlying mathematical models commoditise rapidly, whilst proprietary, immaculately structured data compounds in tangible enterprise value.

Our objective is to strip away the vanity metrics and refocus your engineering capital on what actually generates defensible business value: uncompromising data hygiene. The flashy promise of autonomous multimodal agents frequently obscures the unglamorous, highly repetitive reality of data cleansing, rigorous versioning, and structured governance. When we audit post-deployment failures, we rarely find a business that collapsed because its foundational architecture lacked sufficient reasoning depth. Businesses fail because they inject disorganised, untracked, and fundamentally contaminated data into their production environments, resulting in erratic outputs, recursive hallucination loops, and wholly unsustainable operational costs. You must stop optimising the engine while pouring contaminated fuel into the tank.

Prerequisite Requirements

Before authorising your engineering teams to write a single line of code to query an external API or train a local architecture, technical leaders must establish a stringent baseline of operational readiness. We see too many venture-backed teams rushing into deployment without a coherent, documented map of their own enterprise data topography. You must know precisely where your proprietary information resides, how it flows between legacy systems, and which departments hold the absolute access rights to modify it.

This demands the cultivation of an engineering culture that treats data pipelines as primary commercial assets, equal in importance to the final user interface. Your prerequisite checklist must include a unified data catalogue, explicit role-based access controls, and a deterministic versioning system for your internal datasets. Without these foundational elements locked in place, any subsequent attempt to scale an intelligent system will simply amplify your existing technical debt, burning through your financial runway with alarming speed as engineers spend their sprints debugging unlabelled data rather than shipping product features.

Sequence of Operations

Transitioning from theoretical capability to a robust, revenue-generating product requires a militaristic approach to technical implementation. We have synthesised the following operational sequence based on the post-mortems of dozens of failed enterprise teams and the triumphs of a select few. The focus here is entirely on establishing long-term defensibility through methodical, unyielding data engineering.

Adhering strictly to this sequence ensures that when the underlying algorithmic architecture inevitably shifts, whether due to aggressive vendor pricing changes or sudden open-source advancements, your core business remains securely insulated. The true commercial value sits within your structured pipeline, not the interchangeable algorithmic layer positioned at the end of it.

Foundational Data Auditing

You cannot sanitise what you have not identified. The first operational phase requires deploying automated auditing scripts across your entire storage architecture to categorise information by structure, sensitivity, and operational relevance. We strongly advise mapping the exact provenance of every dataset. If your engineers cannot trace a piece of information back to its original source, it must be quarantined immediately. Ingesting untraceable information into a production environment is the fastest route to compliance violations and systemic logic failures.
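The quarantine rule can be sketched in a few lines of Python. This is a minimal illustration, not a production auditor: the `DatasetRecord` fields, the dataset names, and the `triage` helper are all hypothetical, and a real audit would read from your data catalogue rather than an in-memory list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DatasetRecord:
    """Hypothetical catalogue entry; the field names are illustrative."""
    name: str
    source_system: Optional[str]  # where the data originated, if known
    sensitivity: str              # e.g. "public", "internal", "restricted"


def triage(records):
    """Split records into (approved, quarantined) according to provenance."""
    approved, quarantined = [], []
    for record in records:
        # A missing or blank source means the provenance cannot be traced.
        if record.source_system and record.source_system.strip():
            approved.append(record)
        else:
            quarantined.append(record)
    return approved, quarantined


# Hypothetical catalogue contents, for illustration only.
catalogue = [
    DatasetRecord("crm_contacts", "crm_export", "restricted"),
    DatasetRecord("legacy_dump_2019", None, "internal"),
    DatasetRecord("support_tickets", "  ", "internal"),
]
approved, quarantined = triage(catalogue)
print([r.name for r in quarantined])
```

Treating a blank source the same as a missing one is a deliberate choice here: a placeholder value in the provenance field is no more traceable than an empty one.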

Automated Cleansing Pipelines

Once the audit is complete, teams must construct automated pipelines designed to scrub, format, and standardise inputs before they ever reach an active model. This is where the majority of your early-stage capital should be deployed. We recommend implementing aggressive regular expression filters, anomaly detection scripts, and normalisation protocols to strip out duplicated entries and malformed syntax. Relying on manual intervention at this stage is a guaranteed failure point; the cleansing process must run autonomously and scale elastically with your user growth.
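As a rough sketch of what such a pipeline stage might look like, the following Python uses only the standard library to normalise text with regular expressions and drop duplicates. The function names and the sample rows are assumptions for illustration; real pipelines would add anomaly detection and run on streaming or batch infrastructure.

```python
import re
import unicodedata

_WHITESPACE = re.compile(r"\s+")
# Control characters other than tab, newline and carriage return.
_CONTROL_CHARS = re.compile(r"[\x00-\x08\x0b\x0c\x0e-\x1f\x7f]")


def normalise(text: str) -> str:
    """Apply Unicode NFKC, drop control characters, collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    text = _CONTROL_CHARS.sub("", text)
    return _WHITESPACE.sub(" ", text).strip()


def cleanse(rows):
    """Return normalised rows with empties and case-insensitive duplicates removed."""
    seen = set()
    cleaned = []
    for row in rows:
        value = normalise(row)
        key = value.casefold()  # duplicates are compared case-insensitively
        if not value or key in seen:
            continue
        seen.add(key)
        cleaned.append(value)
    return cleaned


raw = ["  Acme   Ltd ", "acme ltd", "Beta\x00 Corp", "", "Beta Corp"]
print(cleanse(raw))  # → ['Acme Ltd', 'Beta Corp']
```

The first occurrence of each value wins, so the order of the input determines which casing survives. Whether that is acceptable depends on the dataset.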

Deterministic Data Versioning

Operating a production environment without deterministic data versioning is an act of commercial negligence. Just as software developers use Git to track codebase changes, your data engineers must employ tools like DVC to take immutable snapshots of your datasets at specific points in time. When a deployed model suddenly begins generating toxic or inaccurate outputs, your team must possess the capability to roll back the ingested data to the last known stable state. Without this versioning, debugging an erratic system becomes a costly exercise in forensic guesswork.
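To make the rollback idea concrete, here is a minimal content-addressed snapshot registry in plain Python. It does not use or imitate DVC's interface; `SnapshotRegistry`, `snapshot_id`, and the `stable` flag are hypothetical names chosen for this sketch, and a real system would persist snapshots to durable storage.

```python
import hashlib
import json


def snapshot_id(rows) -> str:
    """Derive a deterministic SHA-256 identifier from dataset contents."""
    # Canonical JSON (sorted keys, fixed separators) keeps the hash reproducible.
    payload = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class SnapshotRegistry:
    """In-memory stand-in for a real snapshot store; illustrative only."""

    def __init__(self):
        self._snapshots = {}  # snapshot id -> copy of the rows
        self._history = []    # ordered (snapshot id, stable flag) pairs

    def commit(self, rows, stable=False) -> str:
        sid = snapshot_id(rows)
        self._snapshots[sid] = json.loads(json.dumps(rows))  # deep copy
        self._history.append((sid, stable))
        return sid

    def last_stable(self):
        """Return the rows of the most recent snapshot marked stable."""
        for sid, stable in reversed(self._history):
            if stable:
                return self._snapshots[sid]
        raise LookupError("no stable snapshot recorded")


registry = SnapshotRegistry()
v1 = registry.commit([{"id": 1, "text": "clean"}], stable=True)
v2 = registry.commit([{"id": 1, "text": "clean"}, {"id": 2, "text": "???"}])
rolled_back = registry.last_stable()  # the rows committed as v1
```

Because the identifier is derived from the content, two identical datasets always share an ID, which makes "what exactly was the model fed?" an answerable question during an incident.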

Structured Governance Implementation

Governance is not merely a legal hurdle; it is a structural necessity for maintaining output quality. You must encode your compliance requirements directly into your infrastructure pipeline. This means establishing automated policy checks that block personally identifiable information or proprietary trade secrets from being passed into third-party inference APIs. We see companies facing catastrophic fines and reputational ruin because they allowed eager developers to bypass governance checks in the name of deployment velocity.
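One way to encode such a check is a guard function that every outbound call must pass through. The sketch below is an assumption-laden illustration: the two regular expressions are deliberately simple and would miss many forms of PII, and `guarded_call`, `PolicyViolation`, and `fake_inference_api` are hypothetical names, not part of any vendor SDK.

```python
import re

# Deliberately simple patterns; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


class PolicyViolation(Exception):
    """Raised when a payload fails the pre-inference policy check."""


def find_pii(text: str):
    """Return the sorted names of every PII pattern that matches the text."""
    return sorted(
        name for name, pattern in PII_PATTERNS.items() if pattern.search(text)
    )


def guarded_call(text: str, send):
    """Forward text to `send` only if no PII pattern matches."""
    violations = find_pii(text)
    if violations:
        raise PolicyViolation(f"blocked before inference: {violations}")
    return send(text)


def fake_inference_api(text):
    # Stand-in for a third-party API client; hypothetical.
    return f"processed {len(text)} characters"


print(guarded_call("Summarise the Q3 churn report.", fake_inference_api))
```

Raising an exception rather than silently redacting means a bypass shows up as a failed request in the logs, which is easier to audit than a quietly altered payload.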

Staged Deployment and Feedback Integration

Finally, the transition to production must be staged incrementally. Never expose a new architecture to your entire user base simultaneously. Implement strict shadow deployments where the system processes live inputs without returning outputs to the end user, allowing your team to monitor performance against baseline metrics. Establish a closed-loop feedback mechanism where erroneous outputs flagged by users are automatically routed back to the data engineering team, highlighting precisely where the cleansing pipeline requires immediate refinement.
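A shadow deployment can be sketched as a wrapper that always serves the existing system's answer while quietly recording what the new one would have said. The names below (`shadow_serve`, `agreement_rate`, and the two toy models) are hypothetical, and exact-match agreement is only one possible baseline metric.

```python
def shadow_serve(request, baseline, candidate, log):
    """Serve the baseline response while recording the candidate's output."""
    served = baseline(request)
    try:
        shadow = candidate(request)
        error = None
    except Exception as exc:  # a candidate failure must never reach the user
        shadow, error = None, repr(exc)
    log.append({
        "request": request,
        "served": served,
        "shadow": shadow,
        "error": error,
        "agrees": error is None and shadow == served,
    })
    return served


def agreement_rate(log):
    """Fraction of logged requests where the candidate matched the baseline."""
    return sum(entry["agrees"] for entry in log) / len(log) if log else 0.0


# Toy stand-ins for the existing and the new system.
def baseline_model(text):
    return text.strip().lower()


def candidate_model(text):
    if not text.strip():
        raise ValueError("empty input")
    return text.strip().lower()


log = []
responses = [
    shadow_serve(r, baseline_model, candidate_model, log)
    for r in [" Hello ", "World", "   "]
]
print(agreement_rate(log))
```

Entries where `agrees` is false are natural candidates for the feedback loop: each one points at an input the candidate handles differently from the system users currently rely on.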

Common Failure Points

We continually observe a profound misalignment between where technical teams allocate their budget and where operational disasters actually originate. The industry-wide obsession with fine-tuning massive architectures severely distracts from the systemic rot accumulating in poorly maintained infrastructure pipelines. When we conduct autopsies on failed enterprise initiatives, the root causes are entirely predictable and deeply mundane.

The visualisation below illustrates the primary culprits behind catastrophic cash burn in post-deployment environments. Notice how the vast majority of financial leakage stems from poor data hygiene and governance deficits, rather than algorithmic limitations. Teams that misallocate their capital toward premium compute resources whilst starving their core engineering functions inevitably face systemic operational collapse.

Infrastructure Sourcing Trade-offs

As you map out your architecture, a critical strategic decision emerges: whether to build these unglamorous pipelines entirely in-house or outsource them to specialised infrastructure vendors. We find that startup founders frequently underestimate the severe long-term maintenance burden of operating bespoke internal tools, particularly when dealing with complex, real-time data ingestion across multiple geographic zones.

Conversely, outsourcing your core data hygiene to third parties can create dangerous vendor lock-in, actively eroding the proprietary moat you are striving to build. The decision requires a brutal assessment of your engineering capacity. The following comparison matrix details the strategic trade-offs across five critical dimensions, allowing you to align your infrastructure decisions with your specific runway constraints and technical capabilities.

| Strategic Dimension | In-House Infrastructure | Outsourced Vendor Solutions |
|---|---|---|
| Capital Expenditure | High upfront engineering costs; significant payroll allocation required for initial build. | Lower initial outlay; predictable SaaS pricing that amortises cleanly over the first year. |
| Deployment Velocity | Painfully slow to launch; requires months of iterative testing and internal QA. | Highly accelerated; pre-configured pipelines allow teams to ship within weeks. |
| Long-Term Defensibility | Extremely high; you retain total control over proprietary sorting logic and architecture. | Moderate to low; your pipeline mechanics are ultimately commoditised and shared with competitors. |
| Maintenance Burden | Severe ongoing drain on engineering resources; requires dedicated DataOps teams to manage drift. | Minimal internal effort; the vendor bears the operational cost of uptime and bug resolution. |
| Security & Governance | Absolute oversight over isolated data lakes; highly suitable for stringent regulatory environments. | Relies entirely on vendor compliance certificates; introduces third-party access risks to your audit trail. |

Workflow Roadmap

To implement these standards in practice, your engineering teams need a clear visual roadmap that traces the critical path from raw, unstructured inputs to a sanitised, production-ready state. We have designed this roadmap to highlight the operational gates and validation checks that every piece of information must pass before it is exposed to an active algorithmic model.

This roadmap serves as an architectural blueprint rather than a vendor tutorial. It is intentionally devoid of specific software tooling, focusing instead on the underlying logic flow that ensures operational stability and cost efficiency. Embed this sequential logic directly into your engineering sprints to maintain uncompromising standards across all technical departments.

Raw Ingestion & Triage

Ingest unstructured enterprise data from legacy silos. Apply immediate metadata tags to establish provenance before moving to the staging area.

Automated Scrubbing Protocols

Execute regular expression filters and normalisation scripts. Strip out malformed syntax, duplicate files, and irrelevant noise to preserve compute efficiency.

Governance & PII Quarantine

Run strict compliance checks. Identify and redact personally identifiable information. Any flagged items are quarantined for manual security review.

Version Snapshots & Inference

Lock the sanitised dataset with a cryptographic hash. Deploy the immaculately clean data into the active reasoning model, logging all inputs and outputs.
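The gate sequence of the roadmap can be expressed as an ordered list of checks, where an item stops at the first gate it fails. The sketch below is tool-agnostic and uses only the Python standard library; each gate body is a placeholder (the governance check in particular is a toy), and the names `GATES` and `run_pipeline` are assumptions for illustration.

```python
import hashlib


def tag_provenance(item):
    # Gate 1: every item must carry a source tag before staging.
    return bool(item.get("source"))


def scrub(item):
    # Gate 2: normalise whitespace; reject items that end up empty.
    item["text"] = " ".join(item.get("text", "").split())
    return bool(item["text"])


def governance(item):
    # Gate 3: placeholder compliance check (a real one would detect PII).
    return "@" not in item["text"]


GATES = [("triage", tag_provenance), ("scrubbing", scrub), ("governance", governance)]


def run_pipeline(items):
    """Return (released items, quarantined (gate, item) pairs, batch digest)."""
    released, quarantined = [], []
    for original in items:
        item = dict(original)  # work on a copy so inputs stay untouched
        # next() stops at the first failing gate; later gates never run.
        failed = next((name for name, gate in GATES if not gate(item)), None)
        if failed:
            quarantined.append((failed, item))
        else:
            released.append(item)
    # Gate 4: lock the released batch with a hash before inference.
    fingerprint = repr([(i["source"], i["text"]) for i in released])
    digest = hashlib.sha256(fingerprint.encode("utf-8")).hexdigest()
    return released, quarantined, digest


batch = [
    {"source": "crm", "text": "  hello   world "},
    {"text": "no source"},
    {"source": "crm", "text": "mail bob@example.com"},
    {"source": "crm", "text": "   "},
]
released, quarantined, digest = run_pipeline(batch)
print([reason for reason, _ in quarantined])
```

Recording which gate rejected each item keeps the manual security review focused, since reviewers can see at a glance whether a failure was about provenance, formatting, or compliance.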

Verification and Success Metrics

You cannot effectively manage what you do not rigorously measure, yet we frequently find enterprise technical teams operating without a coherent framework for evaluating pipeline health post-deployment. Success in this pragmatic context is rarely about standard algorithmic benchmark tests or boasting about total parameter counts; it is fundamentally about operational reliability, risk mitigation, and financial efficiency. If your team cannot confidently state your daily cost-per-query, your architecture is out of control.

We advise CTOs to implement strict telemetry across the entirety of the data lifecycle. Key operational metrics must actively monitor data contamination rates, pipeline latency fluctuations, and the mean time to recovery when a malformed dataset inevitably slips through the security net. By ruthlessly tracking these unglamorous indicators, you proactively protect your working capital, ensure your architecture remains robust under severe commercial pressure, and maintain the trust of your enterprise clients.
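These indicators reduce to simple arithmetic once the raw events are logged. The following sketch assumes you already record rejected-row counts, incident timestamps, and daily spend; the function names and the sample figures are hypothetical and chosen only to show the calculations.

```python
from datetime import datetime, timedelta


def contamination_rate(rejected: int, total: int) -> float:
    """Share of ingested rows rejected by the cleansing pipeline."""
    return rejected / total if total else 0.0


def mean_time_to_recovery(incidents) -> timedelta:
    """Average of (resolved - detected) across (detected, resolved) pairs."""
    if not incidents:
        raise ValueError("no incidents recorded")
    durations = [resolved - detected for detected, resolved in incidents]
    return sum(durations, timedelta()) / len(durations)


def cost_per_query(daily_spend: float, daily_queries: int) -> float:
    """Daily inference spend divided by the number of queries served."""
    if daily_queries <= 0:
        raise ValueError("daily_queries must be positive")
    return daily_spend / daily_queries


# Illustrative figures only.
incidents = [
    (datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 9, 30)),
    (datetime(2024, 1, 9, 14, 0), datetime(2024, 1, 9, 15, 30)),
]
mttr = mean_time_to_recovery(incidents)
print(mttr, contamination_rate(25, 1000), cost_per_query(120.0, 48000))
```

Tracking these as time series rather than single numbers matters more than the formulas themselves, because a slow upward trend in contamination or recovery time is the early warning.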

The Long-Term Maintenance Plan

Initial deployment is not the finish line; it is merely the starting point of an ongoing, highly resource-intensive maintenance cycle. As consumer behaviours shift, market semantics drift, and commercial environments continuously evolve, your data pipelines will naturally degrade unless they are subjected to rigorous, continuous oversight. We urge founders to allocate a minimum of forty percent of their annual engineering budget exclusively to post-deployment maintenance, drift monitoring, and routine data hygiene.

A truly defensible business model relies entirely on the compounding value of a meticulously maintained proprietary dataset. You must establish automated alert systems for semantic data drift, schedule uncompromising quarterly audits of your internal access controls, and enforce strict deprecation policies for outdated data versions to prevent storage bloat. This disciplined, long-term approach to systems architecture is the ultimate differentiator between teams that rapidly burn cash on transient hype and those that build enduring, highly profitable enterprise value.
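A drift alert can start from something as simple as comparing word-frequency distributions between a reference snapshot and current inputs. The sketch below uses Jensen-Shannon divergence as a crude lexical proxy for semantic drift; that choice of measure, the 0.2 threshold, and the function names are all assumptions for illustration, and embedding-based measures would capture meaning more faithfully.

```python
import math
from collections import Counter


def token_distribution(texts):
    """Relative frequency of each lower-cased, whitespace-split token."""
    counts = Counter(token for text in texts for token in text.lower().split())
    total = sum(counts.values())
    return {token: n / total for token, n in counts.items()}


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits: 0 for identical, 1 for disjoint."""
    def kl(a, m):
        return sum(pa * math.log2(pa / m[t]) for t, pa in a.items() if pa > 0)

    vocab = set(p) | set(q)
    m = {t: 0.5 * (p.get(t, 0.0) + q.get(t, 0.0)) for t in vocab}
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def drift_alert(reference, current, threshold=0.2):
    """Return (score, alert flag); the default threshold is a placeholder."""
    score = js_divergence(token_distribution(reference), token_distribution(current))
    return score, score > threshold


reference = ["refund my order", "where is my order"]
current = ["cancel my subscription", "pause my subscription"]
score, alert = drift_alert(reference, current)
print(round(score, 3), alert)
```

The threshold has to be calibrated against your own historical data: comparing two known-stable periods gives a sense of the background level of divergence before any alert is wired up.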

Frequently Asked Questions

- How do we quantify the return on investment for data engineering rather than model fine-tuning?

  We recommend tracking the reduction in mean time to recovery when pipeline errors occur, alongside the tangible decrease in API inference costs. By feeding sanitised, highly relevant data into a smaller context window, enterprises routinely witness a dramatic drop in operational overhead. The return on investment is fundamentally realised through protected profit margins and reduced compute expenditure rather than immediate top-line revenue.

- At what stage of growth should a startup transition from outsourced pipelines to in-house infrastructure?

  The transition should occur when your monthly vendor costs eclipse the combined salary of two senior data engineers, or when regulatory compliance demands absolute isolation of your data lakes. Startups should leverage fast vendor tools to find product-market fit, but must inevitably internalise their architecture to build a lasting competitive moat. Delaying this transition typically results in crippling vendor lock-in that degrades your overall enterprise valuation.

- What is the most common reason for data governance failures in mid-sized enterprises?

  Governance typically fails because compliance checks are treated as an afterthought executed by legal teams rather than being coded directly into the engineering pipeline. When developers are incentivised purely on deployment speed, they will invariably bypass manual security protocols to hit their sprint targets. To resolve this, technical leaders must ensure that no data can physically enter the inference stage without passing automated privacy redaction filters.