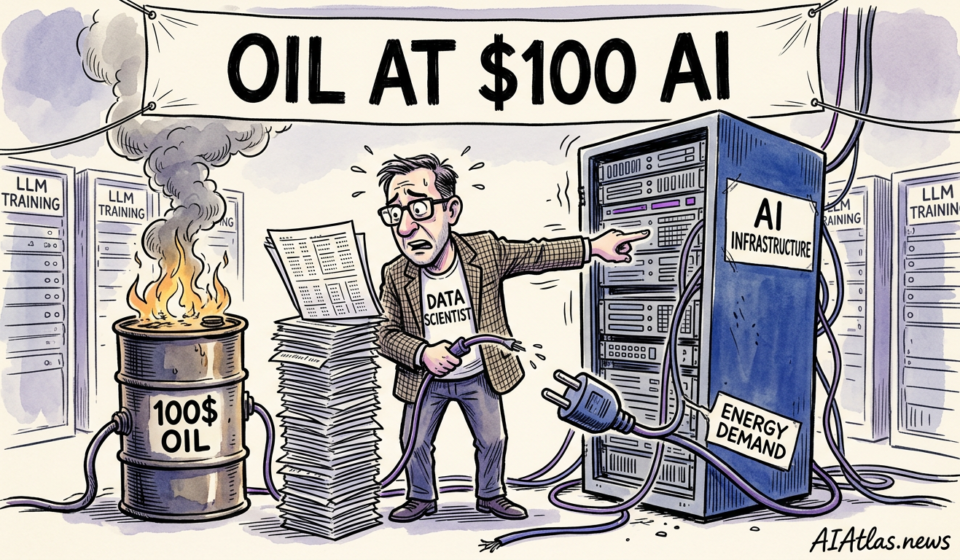

Oil at $100: Why Your AI Infrastructure Strategy Must Pivot Now

The Headline Truth

In our experience covering the intersection of global macroeconomics and technology, few events expose the fragility of Silicon Valley quite like a supply chain shock in the Middle East. Military operations have effectively choked shipping lanes through the Strait of Hormuz, sending global crude oil prices surging past the $100 per barrel mark. While the mainstream financial press focuses on consumer petrol prices and inflation indices, we are watching a far more severe crisis unfold in the server farms that house massive artificial intelligence compute clusters. Geopolitical volatility has just rewritten the underlying economics of machine learning.

Electricity is the lifeblood of large-scale infrastructure, and marginal power pricing is inextricably linked to global energy markets. As crude spikes, so do the costs of natural gas and the broader utility grid, directly eroding the margins of facilities demanding hundreds of megawatts to train frontier models. What we are seeing is an immediate, ruthless stress test for enterprise CTOs and startup founders who previously treated compute as an easily scalable, purely technical resource rather than an energy-dependent commodity.

Context Others Missed

The prevailing narrative over the past two years has celebrated the rapid deployment of massive GPU clusters as a pure indicator of commercial ambition. Investors rewarded teams for hoarding chips, viewing hardware acquisition as the ultimate moat. However, our analysis reveals a critical oversight in this thesis: the assumption of cheap, uninterrupted utility baseloads. While capital expenditure was heavily scrutinised, the operational expenditure required to cool and run tens of thousands of processors continuously was largely modelled on historical, stable energy tariffs. That assumption has completely collapsed.

Grid stability and energy spot pricing dictate the true cost-efficiency of data centres. With crude breaking $100, facilities tied to fossil-fuel-heavy regional grids are seeing their power purchase agreements either renegotiated or subject to extreme peak-pricing penalties. Founders who meticulously optimised their token-generation rates and inference latency are suddenly discovering that a macroeconomic shock thousands of miles away can instantly render their commercial models deeply unprofitable. The conversation must immediately shift from sheer parameter scale to absolute energy efficiency.

The Commercial Ripple Effect

This massive spike in compute operational costs is already forcing a radical restructuring of commercial priorities across the sector. We are observing enterprise CTOs quietly halting massive, monolithic training runs in favour of smaller, domain-specific models. The sudden cost-inefficiency of power-hungry data centres means that building a general-purpose model from scratch is no longer a viable venture-backed strategy unless supported by sovereign wealth or hyperscaler balance sheets. Companies without deeply embedded cost-recovery mechanisms will bleed cash at an unprecedented rate.

For startup founders, the ripple effect translates to immediate margin compression. Inference costs—the ongoing expense of serving a query to a user—are creeping up, forcing businesses to choose between absorbing the loss to maintain user growth or passing the hike to consumers and risking churn. Infrastructure providers, who previously offered highly subsidised cloud credits to attract early-stage clients, are now pulling back. The era of cheap, heavily abstracted compute has ended, replaced by an environment where energy economics dictate product viability.
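The absorb-or-pass-on choice described above comes down to simple per-query arithmetic. The sketch below is illustrative only: the function names and every dollar figure are hypothetical assumptions chosen for the example, not data from any provider.

```python
def gross_margin(price: float, cost: float) -> float:
    """Gross margin as a fraction of revenue for a single query."""
    if price <= 0:
        raise ValueError("price must be positive")
    return (price - cost) / price


def price_for_margin(cost: float, target_margin: float) -> float:
    """Price per query needed to reach target_margin at the given cost."""
    if not 0 <= target_margin < 1:
        raise ValueError("target_margin must be in [0, 1)")
    return cost / (1 - target_margin)


# Hypothetical figures in USD per query (assumptions, not measurements).
PRICE = 0.010
COST_BEFORE = 0.003
COST_AFTER = 0.006  # assumes the serving cost doubles

if __name__ == "__main__":
    # Option 1: absorb the increase and watch the margin shrink.
    print(f"margin before: {gross_margin(PRICE, COST_BEFORE):.0%}")
    print(f"margin after:  {gross_margin(PRICE, COST_AFTER):.0%}")
    # Option 2: raise the price to restore the original margin.
    restored = price_for_margin(COST_AFTER, gross_margin(PRICE, COST_BEFORE))
    print(f"price to hold margin: ${restored:.3f} per query")
```

Under these assumed inputs, absorbing the increase takes the margin from about 70% to about 40%, while holding the margin would mean roughly doubling the price. Real pricing decisions would also have to model churn, which this sketch ignores.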

Stakeholder Impact Analysis

Investors allocating capital to infrastructure funds must urgently recalibrate their risk models. In our view, the most exposed portfolios are those heavily weighted towards tier-two cloud providers lacking long-term, fixed-price energy contracts or captive renewable power sources. Hyperscalers like Microsoft, Google, and Amazon possess the financial gravity to absorb temporary margin hits, but they will inevitably adjust their pricing tiers to protect their primary cloud revenues. This passes the financial burden directly downstream to the application layer.

For enterprise leaders, this geopolitical shock mandates a shift toward rigorous cost orchestration. Hardware investors are rapidly pivoting their interest toward custom silicon designed explicitly for lower thermal design power (TDP) rather than just peak floating-point operations. The stakeholders who will successfully navigate this storm are those who can immediately deploy intelligent workload routing—shifting compute tasks dynamically across global regions where the sun is shining, the wind is blowing, or energy tariffs remain momentarily suppressed.
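As a rough illustration of what intelligent workload routing could look like at its simplest, the sketch below picks the cheapest region that has enough free capacity for a job. The `Region` type, the region names, and the tariffs are all hypothetical; a production scheduler would also need to weigh data locality, egress fees, latency, and carbon intensity.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Region:
    name: str
    price_per_kwh: float  # current tariff in USD (hypothetical feed)
    free_gpus: int        # GPUs available right now


def pick_region(regions: Sequence[Region], gpus_needed: int) -> Optional[Region]:
    """Return the cheapest region that can host the job, or None if none can."""
    candidates = [r for r in regions if r.free_gpus >= gpus_needed]
    if not candidates:
        return None
    return min(candidates, key=lambda r: r.price_per_kwh)


if __name__ == "__main__":
    # Illustrative snapshot only; these are not real tariffs.
    snapshot = [
        Region("gas-heavy-grid", 0.15, 4000),
        Region("hydro-backed", 0.05, 500),
        Region("solar-midday", 0.08, 2000),
    ]
    choice = pick_region(snapshot, gpus_needed=1000)
    print(choice.name if choice else "no region has capacity")
```

The greedy choice is deliberately naive: the cheapest region overall is skipped here because it lacks capacity, which is the kind of constraint a real router has to balance against price.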

Strategic Comparison Table

To fully grasp the severity of this shift, we must contrast the operational metrics before the Strait of Hormuz disruption with the current reality. The metrics below highlight how the sudden energy premium alters the foundational economics of machine learning deployments.

| Operational Metric | Pre-Crisis Baseline (Crude <$80) | Current Reality (Crude >$100) |
|---|---|---|
| Wholesale Grid Electricity | $0.06 – $0.08 per kWh | $0.12 – $0.18 per kWh |
| 10k GPU Training Run (30 Days) | ~$1.2M OPEX | ~$2.5M OPEX |
| Application Layer Gross Margin | 60% – 75% | 35% – 45% |
| Preferred Model Architecture | Monolithic Large Models | Small Language Models (SLMs) |
| Infrastructure Investment Focus | Raw Compute Aggregation | Cooling & Energy Efficiency |

The data paints a stark picture of margin erosion. As the cost per kilowatt-hour doubles in specific markets, the viability of ambitious, long-duration training runs requires an immediate audit. Startups operating on thin capital runways must adjust their product roadmaps to survive the sudden doubling of core operational expenditures.
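To make the link between tariff and training cost concrete, here is a minimal back-of-the-envelope sketch of the electricity portion of a 30-day, 10,000-GPU run. The per-GPU power draw and the PUE (power usage effectiveness) are assumptions chosen for illustration, and the tariffs are simply points inside the table's ranges. The table's OPEX figures presumably include items beyond electricity, so the absolute outputs are not expected to match them; the point is that the electricity bill scales linearly with the tariff.

```python
def energy_cost_usd(
    gpus: int,
    kw_per_gpu: float,
    pue: float,
    hours: float,
    price_per_kwh: float,
) -> float:
    """Electricity cost of a run: IT load scaled by PUE, times hours, times tariff."""
    facility_kw = gpus * kw_per_gpu * pue
    return facility_kw * hours * price_per_kwh


# Assumed inputs (illustrative, not measured):
GPUS = 10_000
KW_PER_GPU = 1.0   # assumed server-level draw per GPU, incl. CPU and networking
PUE = 1.3          # assumed facility overhead for cooling and power delivery
HOURS = 30 * 24    # 30-day run

if __name__ == "__main__":
    before = energy_cost_usd(GPUS, KW_PER_GPU, PUE, HOURS, 0.07)
    after = energy_cost_usd(GPUS, KW_PER_GPU, PUE, HOURS, 0.15)
    print(f"electricity at $0.07/kWh: ${before:,.0f}")
    print(f"electricity at $0.15/kWh: ${after:,.0f}")
    print(f"increase: {after / before:.2f}x")
```

Because every other factor is held fixed, the ratio between the two results equals the ratio of the tariffs. Efficiency gains in `kw_per_gpu` or `pue` are the only levers an operator controls directly.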

Visualised Market Response

Our analysis of spot market cloud pricing reveals a dramatic divergence between energy-independent facilities and those exposed to the fossil fuel premium. The comparison below illustrates the estimated monthly power costs for operating a standard 1,000-GPU cluster across different grid dependencies following the crude oil spike.

The visual evidence clearly demonstrates why reliance on standard commercial utility contracts is now a massive operational liability. Facilities dependent on natural gas or coal, intrinsically tied to the crude index, are experiencing punitive monthly overheads. Consequently, data centre operators situated near captive hydro, nuclear, or advanced geothermal resources are sitting on highly lucrative geographical advantages.

Critical Market Risks

We anticipate a wave of silent bankruptcies over the next two quarters. The most acute risk lies with mid-tier infrastructure startups that signed fixed-price contracts with downstream clients while retaining variable exposure to utility providers. Caught in a structural squeeze, these entities cannot pass on the geopolitical energy premium fast enough to prevent insolvency. The ripple effect will strand their clients, forcing a chaotic migration of training workloads to more stable, albeit more expensive, sovereign cloud environments.
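The structural squeeze described above can be expressed as a break-even tariff: the electricity price at which a provider with fixed revenue per GPU-hour stops making money. The sketch below assumes a deliberately simplified cost model, and every number in it is hypothetical.

```python
def hourly_margin(
    revenue: float, non_energy_cost: float, kwh: float, tariff: float
) -> float:
    """Profit per GPU-hour in USD under a fixed price and a variable tariff."""
    return revenue - non_energy_cost - kwh * tariff


def breakeven_tariff(revenue: float, non_energy_cost: float, kwh: float) -> float:
    """Tariff in USD per kWh at which profit per GPU-hour falls to zero."""
    if kwh <= 0:
        raise ValueError("kwh must be positive")
    return (revenue - non_energy_cost) / kwh


# Hypothetical provider economics per GPU-hour (assumptions, not market data).
REVENUE = 2.00          # fixed contract price charged to clients
NON_ENERGY_COST = 1.85  # hardware depreciation, staff, facilities
KWH = 1.3               # energy consumed per GPU-hour, incl. cooling overhead

if __name__ == "__main__":
    limit = breakeven_tariff(REVENUE, NON_ENERGY_COST, KWH)
    print(f"break-even tariff: ${limit:.3f}/kWh")
    for tariff in (0.07, 0.15):
        margin = hourly_margin(REVENUE, NON_ENERGY_COST, KWH, tariff)
        print(f"tariff ${tariff:.2f}/kWh -> margin ${margin:+.3f} per GPU-hour")
```

With these assumed inputs the provider is profitable at the lower tariff and loss-making at the higher one, and the fixed client price gives it no way to recover the gap.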

Furthermore, this dynamic fundamentally threatens the open-source movement. Producing frontier open-weight models relies heavily on benevolent compute donations or heavily subsidised academic clusters. As universities and non-profits face soaring campus electricity bills, the sheer generosity required to sponsor massive computational philanthropy will evaporate. The concentration of capability will inevitably retreat back behind the walled gardens of the few mega-corporations that possess dedicated energy portfolios.

Conclusion and Future Outlook

The disruption in the Strait of Hormuz is a harsh awakening for a sector that has operated under the illusion of infinite, cheap operational capacity. Moving forward, the true differentiator for technology businesses will not be raw parameter count, but energy-normalised intelligence. We advise commercial leaders to immediately audit their cloud supply chains, enforce stringent efficiency standards on their engineering teams, and prepare for a sustained period where electricity, not silicon, dictates the pace of innovation.

This macroeconomic convergence shows that software does not exist in a vacuum. The physical realities of global trade flows, military standoffs, and commodity pricing are deeply embedded in every query generated by a neural network. Adaptability, brutal resource efficiency, and strategic energy partnerships will define the next generation of dominant infrastructure players.

Frequently Asked Questions

- How should early-stage startups adjust their compute strategy?

  - Founders should immediately pivot from large-scale model training towards fine-tuning smaller, task-specific architectures. Securing longer-term compute pricing agreements that cap energy-related surcharges is vital to extending financial runways.

- Will hyperscalers absorb these energy costs for their clients?

  - In our experience, hyperscalers will protect their core margins by eventually raising spot pricing or implementing variable energy surcharges. They may offer temporary grace periods, but the end-user will ultimately bear the structural increase in overheads.

- Does this scenario accelerate the transition to sustainable energy in tech?

  - Absolutely. The geopolitical volatility surrounding crude oil acts as a powerful financial catalyst for data centre operators to invest directly in captive nuclear, geothermal, and solar infrastructure to guarantee operational stability.