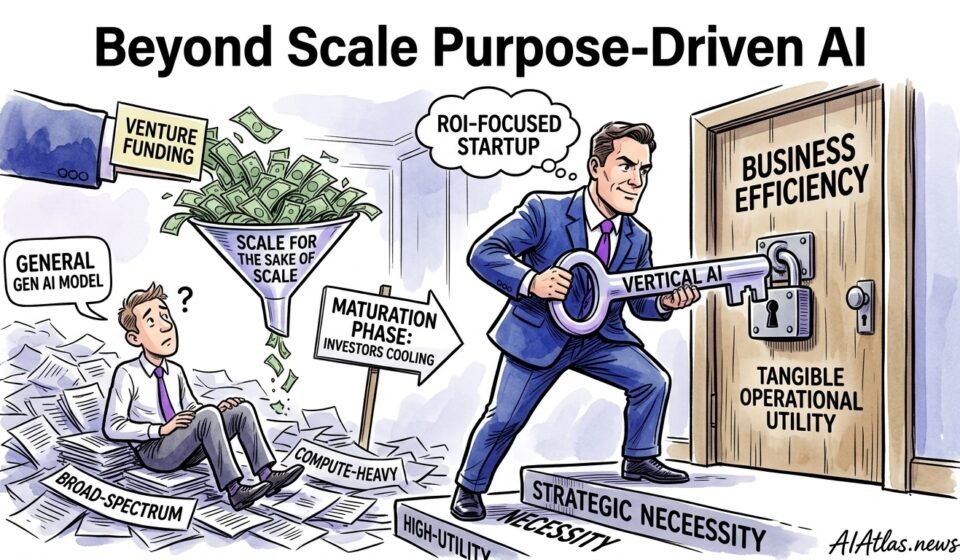

Beyond Scale: Why Purpose-Driven AI Is the Next Commercial Frontier

The Strategic Objective

We believe the immediate commercial imperative for ROI-focused startups is not to chase ever-larger, generalised generative models but to build narrow, domain-specific systems that produce measurable business outcomes with modest capital and energy spend. Karen Hao’s call at MIT to pause the escalation of massive-scale pretraining isn’t an academic quibble; it maps directly to what we are seeing in boardrooms and on cap tables: investors reward predictable revenue and defensible margins, not parameter counts or petaflops of training compute.

Our objective is straightforward: use small-to-medium models and careful domain engineering to deliver higher utility per pound spent. That means selecting problems where the data is proprietary or hard to replicate, where the output drives a transactional uplift (fewer errors, faster decisions, improved throughput), and where the route to commercialisation is clearly measurable.


Prerequisite Checklist

Before committing capital to model development, we require hard evidence that the target problem yields direct commercial value: measurable KPIs such as conversion lift, error reduction, time-to-decision, or cost avoidance. Vague promises about “better UX” are insufficient; your KPIs must map to revenue or margin within 12 months.

We also insist on three technical prerequisites: clean labelled data or a realistic plan to collect it; a compact model architecture that is trainable within your budget envelope; and a deployment pathway that satisfies latency, privacy and regulatory constraints. Absent those, you’re funding research, not a product.

Sequence of Operations (Steps 1-5)

We recommend a disciplined five-step cadence that contains risk and makes go/no-go milestones explicit. Each step is designed to produce a binary decision on the next funding tranche.

Below we outline the sequence we use when advising founders or running pilots.

- Define outcome and metric. Translate business impact into a single north-star metric and an acceptance threshold (e.g., 12% reduction in lab processing time or £X/month per customer uplift).

- Data audit and minimal dataset build. Collect a small, high-quality dataset (3k–50k examples depending on task) and run labelling rounds until inter-annotator agreement is stable.

- Model selection and cost estimate. Choose the smallest architecture that meets the metric in validation; simulate inference cost and end-to-end latency to forecast operating expense.

- Pilot with real users. Deploy in a controlled slice of customers or operations, instrument tightly, and measure against the north-star over a defined test window.

- Scale or kill decision. If the pilot clears the acceptance thresholds and unit economics hold, plan staged rollout with automation for monitoring and retraining; otherwise rework scope or terminate.
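Step 2 depends on knowing when inter-annotator agreement is “stable”, but the steps above do not name a measure. A minimal sketch, assuming two annotators label the same items, uses Cohen's kappa (a standard chance-corrected agreement statistic). The stopping rule `agreement_is_stable` and its 0.02 tolerance are hypothetical choices for illustration, not part of the sequence described here.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's own label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_expected == 1.0:
        # Both annotators used one identical label for every item; treat as perfect agreement.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


def agreement_is_stable(kappa_per_round: list[float], tolerance: float = 0.02) -> bool:
    """Hypothetical stopping rule: kappa moved less than `tolerance` between the last two rounds."""
    return len(kappa_per_round) >= 2 and abs(kappa_per_round[-1] - kappa_per_round[-2]) < tolerance


# Made-up labels from one labelling round.
annotator_1 = ["defect", "defect", "ok", "ok"]
annotator_2 = ["defect", "ok", "ok", "ok"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
print(agreement_is_stable([0.55, 0.68, 0.69]))  # True
```

Kappa is used rather than raw percentage agreement because it discounts the agreement two annotators would reach by chance on a skewed label set.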

Common Failure Points

Entrepreneurs repeatedly squander runway on three avoidable mistakes. First, they attempt broad applicability before proving the narrow path to value; the result is a bloated model that satisfies no customer completely. Second, they underinvest in data quality and overinvest in model size; cleaned, curated data almost always beats more parameters.

Third, teams neglect operational readiness (monitoring, data drift detection and incident response), assuming “we’ll fix it later”. That deferred work multiplies support costs and erodes trust. If you cannot staff the ops work or budget for it in your time-to-market (TTM) plan, your launch will stall.

Comparison Table: DIY vs Outsource

Below is a pragmatic comparison we use when advising founders on build-versus-buy decisions. Every row is a trade-off: choose based on time-to-market, control required, and your ability to iterate on domain data.

| Dimension | DIY | Outsource |
|---|---|---|
| Cost (initial) | Lower tooling fees, higher engineering salaries; predictable spend if team exists. | Higher up-front vendor fees; can be cheaper for short pilots without hiring. |
| Time-to-market | Slower unless experienced hires already in place; iteration cycles controlled internally. | Faster launch with vendor templates; dependent on vendor availability and SLAs. |
| Control & IP | Full IP ownership and model behaviour transparency; easier to lock in unique advantages. | Less control; potential vendor lock-in and limitations on model internals. |
| Domain expertise | Requires hiring or training domain ML engineers; deeper long-term capability if successful. | Immediate access to specialist teams but knowledge may not transfer to your staff. |
| Operational burden | Higher: you must run infra, monitoring, and compliance; recurring engineering cost. | Lower short-term ops; higher long-term recurring vendor costs and dependency. |

Use DIY when domain IP and long-term margin require ownership; use outsource for constrained runways, rapid market tests, or when you lack the hires to build reliably.
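The cost rows of the table trade a larger build cost against lower recurring spend. One rough way to see when DIY starts to pay off is to find the month at which cumulative costs cross. The sketch below assumes flat monthly costs, and every figure in the example is hypothetical, chosen only to illustrate the calculation.

```python
from typing import Optional


def cumulative_cost(upfront: float, monthly: float, months: int) -> float:
    """Total spend after `months`, assuming a flat monthly run cost."""
    return upfront + monthly * months


def breakeven_month(
    diy_upfront: float,
    diy_monthly: float,
    vendor_upfront: float,
    vendor_monthly: float,
    horizon_months: int = 60,
) -> Optional[int]:
    """First month at which cumulative DIY spend is no higher than outsourced spend.

    Returns None if DIY never catches up within the planning horizon.
    """
    for month in range(horizon_months + 1):
        diy = cumulative_cost(diy_upfront, diy_monthly, month)
        vendor = cumulative_cost(vendor_upfront, vendor_monthly, month)
        if diy <= vendor:
            return month
    return None


# Hypothetical figures (£): DIY pays for hiring and the initial build, then runs
# cheaply; the vendor charges a setup fee plus a higher monthly fee.
print(breakeven_month(diy_upfront=180_000, diy_monthly=8_000,
                      vendor_upfront=60_000, vendor_monthly=15_000))  # 18
```

If your runway ends before the crossover month, the table's advice to outsource for constrained runways applies; if it extends well beyond it, ownership starts to pay for itself.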

Visualised Workflow Roadmap

We prefer a simple visual to align product, data and engineering teams on milestones. This roadmap is minimal: it emphasises early customer feedback loops and a measured scale-up.

Paste this into your investor one-pager and it will crystallise budget asks and timing assumptions.

Minimal Dataset → Pilot Model → User Trial → Scale & Ops

Verification & Success Metrics

Verification is where commercial projects live or die. We use three metric pillars: outcome delta (did the metric move by the acceptance threshold?), unit economics (LTV:CAC or £/user improvement), and operational stability (MTTR, false positive rates, drift alerts). Each must be tracked in living dashboards and tied to automated alerts.
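To make the three pillars concrete, here is a minimal sketch of a pilot readout check. It covers only one signal per pillar: the percentage reduction in a lower-is-better north-star metric, the LTV:CAC ratio, and MTTR computed as the mean recovery time across incidents. The class names and the default thresholds (including the 3.0 LTV:CAC bar and the 60-minute MTTR ceiling) are hypothetical placeholders for your own acceptance criteria.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class PilotReadout:
    baseline_metric: float  # north-star before the pilot (lower is better, e.g. minutes per sample)
    pilot_metric: float     # same metric measured during the pilot window
    ltv: float              # customer lifetime value (£)
    cac: float              # customer acquisition cost (£)
    recovery_minutes: list[float] = field(default_factory=list)  # time to recover, per incident


@dataclass
class Thresholds:
    min_reduction: float = 0.12     # e.g. a 12% reduction in lab processing time
    min_ltv_to_cac: float = 3.0     # hypothetical unit-economics bar
    max_mttr_minutes: float = 60.0  # hypothetical stability bar


def evaluate_pilot(r: PilotReadout, t: Thresholds) -> dict[str, bool]:
    """Pass/fail per pillar; the pilot clears only if every pillar passes."""
    reduction = (r.baseline_metric - r.pilot_metric) / r.baseline_metric
    mttr = mean(r.recovery_minutes) if r.recovery_minutes else 0.0
    return {
        "outcome_delta": reduction >= t.min_reduction,
        "unit_economics": r.ltv / r.cac >= t.min_ltv_to_cac,
        "operational_stability": mttr <= t.max_mttr_minutes,
    }


# Made-up pilot readout.
readout = PilotReadout(baseline_metric=50.0, pilot_metric=43.0, ltv=9_000, cac=2_500,
                       recovery_minutes=[30, 45, 90])
result = evaluate_pilot(readout, Thresholds())
print(result, "go" if all(result.values()) else "no-go")
```

Returning a per-pillar dictionary rather than a single boolean keeps the dashboard honest about which pillar failed, which is what decides whether to rework scope or terminate.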

We expect a successful pilot to demonstrate a statistically significant outcome delta within the prescribed test window and to show that per-unit inference cost preserves margin at scale. If either of those fails, the right answer is often to tighten scope, not increase model size.
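Both checks in this paragraph reduce to a few lines of arithmetic. The sketch below assumes the north-star is a conversion-style rate compared between a control slice and a pilot slice, so it uses a standard two-sided, two-proportion z-test; a continuous metric such as processing time would call for a different test (Welch's t-test, for example). The margin check assumes a flat monthly serving cost spread over request volume. All figures are hypothetical.

```python
import math


def two_proportion_z_test(successes_a: int, n_a: int,
                          successes_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two rates, using a pooled variance."""
    rate_a, rate_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("pooled rate is exactly 0 or 1; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def unit_margin(price_per_unit: float, monthly_serving_cost: float,
                monthly_volume: int, other_cost_per_unit: float) -> float:
    """Gross margin per unit once serving cost is spread across monthly volume."""
    inference_cost_per_unit = monthly_serving_cost / monthly_volume
    return (price_per_unit - inference_cost_per_unit - other_cost_per_unit) / price_per_unit


# Hypothetical pilot: 200/2,000 conversions in control vs 260/2,000 in the pilot slice.
z, p = two_proportion_z_test(200, 2_000, 260, 2_000)
print(f"z = {z:.2f}, p = {p:.4f}")
# Hypothetical economics: £1.00 price, £3,000/month serving for 12,000 units, £0.25 other cost.
print(unit_margin(1.00, 3_000, 12_000, 0.25))  # 0.5
```

Re-running `unit_margin` at the volumes in your rollout plan shows whether per-unit inference cost still preserves margin at scale.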

The Long-Term Maintenance Plan

Maintaining a vertical model is not a “set-and-forget” exercise. We budget two ongoing roles at minimum: a data engineer who curates incoming examples and an ops engineer who maintains monitoring and cost controls. Plan for quarterly model reviews, monthly drift audits, and incident playbooks for critical failures.
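For the monthly drift audits, one common and simple statistic is the population stability index (PSI), which compares the binned distribution of a feature or model score in production against a reference window. This is a swapped-in technique rather than one the plan prescribes, and the 0.1 and 0.25 cut-offs below are a widely used rule of thumb, not a standard; treat them as assumptions to tune against your own data.

```python
import math


def to_proportions(counts: list[int]) -> list[float]:
    total = sum(counts)
    return [c / total for c in counts]


def population_stability_index(reference_counts: list[int], current_counts: list[int],
                               eps: float = 1e-6) -> float:
    """PSI = sum over bins of (current - reference) * ln(current / reference)."""
    if len(reference_counts) != len(current_counts):
        raise ValueError("both windows must use the same bins")
    psi = 0.0
    for ref, cur in zip(to_proportions(reference_counts), to_proportions(current_counts)):
        ref, cur = max(ref, eps), max(cur, eps)  # guard against log(0) on empty bins
        psi += (cur - ref) * math.log(cur / ref)
    return psi


def drift_status(psi: float) -> str:
    # Rule-of-thumb bands; an assumption, not a standard.
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate"
    return "significant"


# Hypothetical model-score histograms (four bins) for the reference month and this month.
psi = population_stability_index([250, 250, 250, 250], [100, 200, 300, 400])
print(round(psi, 3), drift_status(psi))  # 0.228 moderate
```

A "moderate" result would typically feed the quarterly model review; a "significant" one is the kind of signal an incident playbook should pick up.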

From a financial perspective, expect maintenance OPEX (monitoring, retraining, infra) to settle at 10–30% of your initial development spend annually for a mature vertical product. Costs trend toward the lower end of that range when your deployment is lightweight or when you have deterministic rule-based fallbacks that reduce retraining frequency.
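The 10–30% band translates directly into a multi-year cost-of-ownership figure for a budget ask. A minimal sketch, assuming a flat annual maintenance rate and a hypothetical £400,000 initial build:

```python
def maintenance_opex_range(initial_dev_spend: float,
                           low_rate: float = 0.10,
                           high_rate: float = 0.30) -> tuple[float, float]:
    """Annual maintenance OPEX band as a share of initial development spend."""
    return initial_dev_spend * low_rate, initial_dev_spend * high_rate


def total_cost_of_ownership(initial_dev_spend: float, years: int, annual_rate: float) -> float:
    """Initial build plus `years` of maintenance at a flat annual rate."""
    return initial_dev_spend * (1 + annual_rate * years)


build = 400_000  # hypothetical initial development spend (£)
low, high = maintenance_opex_range(build)
print(f"Annual maintenance: £{low:,.0f} to £{high:,.0f}")
for rate in (0.10, 0.30):
    print(f"3-year TCO at {rate:.0%}: £{total_cost_of_ownership(build, 3, rate):,.0f}")
```

On these assumptions, three years of maintenance adds between 30% and 90% of the original build cost, which is why the two ongoing roles belong in the initial budget rather than being deferred.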

Frequently Asked Questions

- When should a startup prefer a domain-specific model over a general foundation model?

  - Prefer a domain-specific model when you can define clear, measurable outcomes tied to revenue or cost savings, when you hold or can collect proprietary data, and when latency or privacy requirements rule out large shared models. These conditions make specialised models materially more efficient and defensible.

- How much data is “enough” to get started?

  - For many vertical tasks, 3,000–50,000 high-quality labelled examples will suffice to validate a hypothesis. The exact number depends on task complexity and label noise; quality often outweighs quantity, and active sampling accelerates progress.

- Can outsourcing lock you into poor economics long term?

  - Yes. Vendors accelerate pilots but can create dependency and recurring costs that erode margins. Use outsourcing for early validation, and insist on data portability and exportable models before committing to scale.
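The data question above mentions active sampling. One common variant is uncertainty sampling: send the unlabelled items the current model is least sure about to annotators first. A minimal sketch, assuming the model exposes per-class probabilities for each item (the item IDs and probabilities below are made up):

```python
import math


def predictive_entropy(probs: list[float]) -> float:
    """Shannon entropy (nats) of a predicted class distribution; higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labelling(predictions: dict[str, list[float]], budget: int) -> list[str]:
    """Uncertainty sampling: the `budget` unlabelled items with the highest predictive entropy."""
    ranked = sorted(predictions, key=lambda item: predictive_entropy(predictions[item]),
                    reverse=True)
    return ranked[:budget]


# Made-up class probabilities from the current pilot model for three unlabelled documents.
unlabelled = {
    "doc-1": [0.98, 0.02],
    "doc-2": [0.55, 0.45],
    "doc-3": [0.70, 0.30],
}
print(select_for_labelling(unlabelled, budget=2))  # ['doc-2', 'doc-3']
```

Spending the labelling budget on uncertain items, rather than on a uniform random sample, is one way to keep a dataset in the 3,000–50,000 range while still covering the hard cases.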