Energy Efficiency: The New Competitive Moat in AI Scaling

The Strategic Objective

We are watching enterprise artificial intelligence consume capital at a staggering rate. The compute costs of running large probabilistic models have pushed many startup runways to the brink, turning what should be operational assets into cash-burning liabilities. For the average commercial operator, scaling purely neural networks is becoming economically unsustainable, and that cost is a major barrier to widespread enterprise adoption.

Researchers at Tufts have recently introduced a neuro-symbolic model that merges neural network pattern matching with structured symbolic reasoning. On controlled reasoning benchmarks, the researchers report a 100x reduction in energy usage while maintaining high accuracy, a result that investors and enterprise decision-makers cannot afford to ignore. In our experience, surviving the next wave of commercial intelligence requires moving beyond parameter bloat. The objective here is simple: wherever the workload allows, replace energy-hungry legacy architectures with hybrid models so that your systems can scale sustainably and your operating costs fall.

Prerequisite Requirements

Before overhauling your existing tech stack, it is vital to establish a clear baseline of your current compute expenditure. We frequently see founders rush into architectural transitions without a granular understanding of where their cloud credits are actually going. You must audit your existing neural network infrastructure to identify the exact inference workflows that are needlessly burning computational resources.

Moving to a neuro-symbolic framework demands a distinct set of technical competencies. Your engineering team needs hybrid expertise, bridging the gap between traditional deep learning and formal logic systems. Ensure you have the following assets secured before initiating the transition.

- A comprehensive cloud computing audit detailing your most expensive inference routes.

- Engineering talent experienced in both neural networks and explicit symbolic logic programming.

- A robust testing environment to safely sandbox the hybrid model away from production traffic.

- Clearly documented business rules that can be translated into formal symbolic constraints.
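To make the audit item concrete, the sketch below ranks inference routes by attributed spend. It assumes you can export per-call records with a route name, accelerator time, and cost; the `InferenceRecord` schema and the route names in the test are hypothetical, not part of any particular cloud provider's billing API.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class InferenceRecord:
    """One logged inference call (hypothetical schema; map your billing export onto it)."""
    route: str           # endpoint or task name the call was made for
    gpu_seconds: float   # accelerator time attributed to the call
    cost_usd: float      # billed cost attributed to the call


def rank_routes_by_cost(records: Iterable[InferenceRecord]) -> List[Dict]:
    """Aggregate spend per route, most expensive first, with each route's share of total spend."""
    totals: Dict[str, Dict[str, float]] = defaultdict(
        lambda: {"calls": 0, "gpu_seconds": 0.0, "cost_usd": 0.0}
    )
    for r in records:
        t = totals[r.route]
        t["calls"] += 1
        t["gpu_seconds"] += r.gpu_seconds
        t["cost_usd"] += r.cost_usd
    grand_total = sum(t["cost_usd"] for t in totals.values()) or 1.0  # avoid dividing by zero
    ranked = sorted(totals.items(), key=lambda kv: kv[1]["cost_usd"], reverse=True)
    return [
        {
            "route": route,
            "calls": int(t["calls"]),
            "gpu_seconds": t["gpu_seconds"],
            "cost_usd": t["cost_usd"],
            "cost_per_call_usd": t["cost_usd"] / t["calls"],
            "share_of_spend": t["cost_usd"] / grand_total,
        }
        for route, t in ranked
    ]
```

Routes near the top of this list that mostly answer deterministic, rule-shaped questions are the natural first candidates for migration.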

Sequence of Operations

Transitioning from a pure neural architecture to a hybrid neuro-symbolic model is an exercise in surgical precision. We strongly advise against a blanket replacement of your existing intelligence systems. Instead, operators must isolate the most resource-intensive inference tasks and migrate them sequentially, preventing any disruption to the core user experience.

The following sequence provides a commercially viable path to integration. By structuring the transition into discrete, verifiable stages, you protect your product functionality while steadily bringing your compute overhead down to manageable levels.

Audit and Isolate

Begin by identifying the specific queries that require strict logical deduction rather than creative generation. Extract these queries from your primary large language model pipeline and isolate them in a dedicated staging environment for hybrid processing.
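As a first pass at isolation, a crude triage can separate logic-shaped queries from generative ones. This is a minimal sketch that assumes keyword cues are good enough for a first cut; the cue lists are illustrative placeholders, and in practice you would refine them (or replace them with a small classifier) using your audited traffic. Ambiguous queries deliberately stay on the existing pipeline.

```python
import re
from typing import List, Tuple

# Illustrative cue patterns only; derive real ones from your audited query logs.
LOGIC_CUES = [
    r"\bis\b.*\beligible\b",
    r"\bcomplian(t|ce)\b",
    r"\bvalidate\b",
    r"\bcalculate\b",
    r"\bdoes\b.*\bsatisfy\b",
]
GENERATIVE_CUES = [r"\bwrite\b", r"\bdraft\b", r"\bsummari[sz]e\b", r"\bbrainstorm\b"]


def triage(query: str) -> str:
    """Label a query 'logic', 'generative', or 'unknown' from simple regex cues."""
    q = query.lower()
    logic = any(re.search(p, q) for p in LOGIC_CUES)
    generative = any(re.search(p, q) for p in GENERATIVE_CUES)
    if logic and not generative:
        return "logic"
    if generative and not logic:
        return "generative"
    return "unknown"  # mixed or unmatched queries stay on the existing LLM pipeline


def isolate(queries: List[str]) -> Tuple[List[str], List[str]]:
    """Split queries into (staging set for hybrid processing, everything else)."""
    staging: List[str] = []
    remaining: List[str] = []
    for q in queries:
        (staging if triage(q) == "logic" else remaining).append(q)
    return staging, remaining
```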

Define Symbolic Rules

Task your domain experts with writing explicit, hard-coded rules for the isolated tasks. The symbolic engine will rely on these deterministic pathways to process logic, ensuring that the system does not waste energy hallucinating answers to straightforward structural queries.
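Explicit rules can be as simple as Horn-clause style if-then statements evaluated by forward chaining. The sketch below is a generic illustration, not the Tufts architecture; the refund-policy rules and fact names are hypothetical stand-ins for whatever your domain experts write. The returned trace records which rules fired, giving you an audit trail the pure-neural route cannot offer.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Set, Tuple


@dataclass(frozen=True)
class Rule:
    """If every fact in `premises` holds, assert `conclusion`."""
    name: str
    premises: FrozenSet[str]
    conclusion: str


def forward_chain(facts: Iterable[str], rules: List[Rule]) -> Tuple[Set[str], List[str]]:
    """Apply rules repeatedly until no new facts appear; return all facts and the firing order."""
    known = set(facts)
    trace: List[str] = []
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.premises <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                trace.append(rule.name)
                changed = True
    return known, trace


# Hypothetical refund-policy rules written by domain experts.
RULES = [
    Rule("R1", frozenset({"purchased_within_30_days", "item_unused"}), "refundable"),
    Rule("R2", frozenset({"refundable", "has_receipt"}), "approve_refund"),
]
```

Because every conclusion is derived from named rules, the same input always produces the same answer and the same trace, which is what makes this path cheap to run and easy to verify.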

Train the Hybrid Interface

Implement the neural component strictly as a perception or translation layer. Train it to recognise incoming unstructured data and format it cleanly so the symbolic logic engine can process it without requiring massive computational power.
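The contract between the perception layer and the logic engine matters more than the model behind it. In this sketch, `perceive` is a hypothetical placeholder: a few regular expressions stand in for the trained neural extractor so the interface can be exercised end to end. The `RefundRequest` schema and the fact names are illustrative assumptions.

```python
import re
from dataclasses import dataclass
from typing import Set


@dataclass(frozen=True)
class RefundRequest:
    """Structured record the perception layer must emit (hypothetical schema)."""
    days_since_purchase: int  # -1 when the text gives no usable date
    item_unused: bool
    has_receipt: bool


def perceive(message: str) -> RefundRequest:
    """Placeholder for the trained neural extractor: free text in, fixed schema out."""
    text = message.lower()
    days = re.search(r"(\d+)\s+days?\s+ago", text)
    return RefundRequest(
        days_since_purchase=int(days.group(1)) if days else -1,
        item_unused="unused" in text or "unopened" in text,
        has_receipt="receipt" in text and "no receipt" not in text,
    )


def to_facts(req: RefundRequest) -> Set[str]:
    """Translate the structured record into symbolic facts for the logic engine."""
    facts: Set[str] = set()
    if 0 <= req.days_since_purchase <= 30:
        facts.add("purchased_within_30_days")
    if req.item_unused:
        facts.add("item_unused")
    if req.has_receipt:
        facts.add("has_receipt")
    return facts
```

Swapping the regexes for a real model changes nothing downstream, provided the model emits the same schema.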

Shadow Deployment

Run the newly built neuro-symbolic pipeline alongside your legacy system. Compare the outputs and track energy consumption in real time, confirming that logical accuracy matches or exceeds your existing model without adding unacceptable latency.
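A shadow harness can be very small. The sketch below assumes each pipeline is a callable returning an answer together with an energy estimate in joules taken from your own telemetry (for example, accelerator power sampling); the harness times each call but does not measure energy itself. Users are only ever served the legacy answer. Two simplifications are worth flagging: the hybrid path runs sequentially here, whereas a production shadow would typically mirror traffic asynchronously, and agreement with the legacy system is only a proxy for accuracy, so compare both against labelled answers wherever you have them.

```python
import time
from dataclasses import dataclass, field
from statistics import median
from typing import Callable, List, Tuple

# Each pipeline returns (answer, energy_joules); energy comes from your telemetry, not this code.
Pipeline = Callable[[str], Tuple[str, float]]


@dataclass
class ShadowReport:
    total: int = 0
    agreements: int = 0
    legacy_latency_s: List[float] = field(default_factory=list)
    hybrid_latency_s: List[float] = field(default_factory=list)
    legacy_energy_j: float = 0.0
    hybrid_energy_j: float = 0.0

    def summary(self) -> dict:
        return {
            "agreement_rate": self.agreements / self.total if self.total else 0.0,
            "median_legacy_latency_s": median(self.legacy_latency_s) if self.total else 0.0,
            "median_hybrid_latency_s": median(self.hybrid_latency_s) if self.total else 0.0,
            # How many times more energy the legacy path used than the hybrid path.
            "energy_ratio": (self.legacy_energy_j / self.hybrid_energy_j
                             if self.hybrid_energy_j else float("inf")),
        }


def shadow_run(queries: List[str], legacy: Pipeline, hybrid: Pipeline):
    """Serve legacy answers while running the hybrid pipeline on the same inputs."""
    report = ShadowReport()
    served: List[str] = []
    for q in queries:
        t0 = time.perf_counter()
        legacy_answer, legacy_j = legacy(q)
        t1 = time.perf_counter()
        hybrid_answer, hybrid_j = hybrid(q)  # sequential here for simplicity
        t2 = time.perf_counter()
        report.total += 1
        report.agreements += int(legacy_answer == hybrid_answer)
        report.legacy_latency_s.append(t1 - t0)
        report.hybrid_latency_s.append(t2 - t1)
        report.legacy_energy_j += legacy_j
        report.hybrid_energy_j += hybrid_j
        served.append(legacy_answer)  # users only ever see the legacy output
    return served, report
```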

Full Routing Cutover

Once the shadow deployment validates the operational cost reductions, update your API gateway to route all logic-heavy prompts directly to the neuro-symbolic system, officially decommissioning the expensive pure-neural routes for those tasks.
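At the gateway, the cutover can be expressed as a routing table keyed by task category. The category and backend names below are hypothetical, and the sketch is not tied to any specific API gateway product. Unknown categories fall through to the legacy route; keeping a one-line rollback path is a design choice we would recommend rather than something the cutover strictly requires.

```python
from typing import Dict

# Hypothetical routing table: task category -> backend. A category moves to the
# neuro-symbolic backend only after its shadow deployment has been validated.
ROUTES: Dict[str, str] = {
    "refund_eligibility": "neuro_symbolic",
    "compliance_check": "neuro_symbolic",
    "marketing_copy": "legacy_llm",
}
DEFAULT_BACKEND = "legacy_llm"


def route(category: str, routes: Dict[str, str] = ROUTES) -> str:
    """Return the backend for a prompt's task category; unknown categories stay on legacy."""
    return routes.get(category, DEFAULT_BACKEND)


def rollback(category: str, routes: Dict[str, str]) -> Dict[str, str]:
    """Return a new routing table with one category sent back to the legacy backend."""
    updated = dict(routes)  # copy, so the live table is never mutated in place
    updated[category] = DEFAULT_BACKEND
    return updated
```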

Common Failure Points

Implementation is where theoretical efficiency often crashes into practical ruin. In our capacity advising scale-ups, we routinely observe teams falling into predictable traps when attempting to fuse logic frameworks with neural components. The most common error is over-complicating the symbolic ruleset, which leads to brittle systems that fail dramatically when encountering edge cases in production environments.

Another frequent misstep involves poor data pipeline hygiene during the transition phase. If your neural pattern matcher feeds noisy, unstructured outputs into the symbolic reasoner, the entire pipeline will bottleneck, eliminating the energy savings you hoped to achieve. We urge operators to maintain strict validation boundaries between the two processing layers.
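A validation boundary can be a plain schema check placed between the two layers. In this sketch the field names and the 0.9 confidence threshold are assumptions; the point is that malformed or low-confidence outputs are rejected before they reach the reasoner and are sent back to the legacy route instead. The exact type check (rather than `isinstance`) is deliberate, because Python treats `True` as an instance of `int`.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical contract the neural layer must satisfy before the reasoner runs.
SCHEMA = {"days_since_purchase": int, "item_unused": bool, "has_receipt": bool}
MIN_CONFIDENCE = 0.9  # assumed threshold; tune it on shadow-deployment data


def validate(raw: Dict[str, Any]) -> Tuple[bool, List[str]]:
    """Return (ok, errors) for one neural output checked against SCHEMA."""
    errors: List[str] = []
    for name, expected in SCHEMA.items():
        if name not in raw:
            errors.append(f"missing field: {name}")
        elif type(raw[name]) is not expected:  # exact check: bool would pass isinstance(x, int)
            errors.append(f"{name}: expected {expected.__name__}, got {type(raw[name]).__name__}")
    unexpected = set(raw) - set(SCHEMA) - {"confidence"}
    if unexpected:
        errors.append(f"unexpected fields: {sorted(unexpected)}")
    confidence = raw.get("confidence")
    if not isinstance(confidence, (int, float)) or confidence < MIN_CONFIDENCE:
        errors.append(f"confidence missing or below {MIN_CONFIDENCE}")
    return (not errors, errors)


def dispatch(raw: Dict[str, Any]) -> Tuple[str, List[str]]:
    """Send valid outputs to the symbolic reasoner and everything else to the legacy route."""
    ok, errors = validate(raw)
    return ("symbolic", errors) if ok else ("legacy_fallback", errors)
```

Falling back rather than failing also softens the brittleness problem described above: an edge case the ruleset cannot handle degrades to the old behaviour instead of an error.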

Build Versus Buy Comparison

Founders must make a critical capital allocation decision: build the neuro-symbolic architecture internally or outsource the implementation to a specialised vendor. There is no universally correct answer, but the choice fundamentally alters your operational risk profile, intellectual property retention, and cash runway.

Building in-house retains full ownership and ensures deep customisation, yet it demands elite engineering talent that commands a premium salary. Outsourcing accelerates deployment and provides predictable upfront costs, though it often results in vendor lock-in and a generic logic ruleset that may not perfectly align with your bespoke business requirements.

| Factor | In-House Development | Outsourced Implementation |
|---|---|---|
| Capital Expenditure | High initial payroll overhead | Predictable milestone billing |
| Intellectual Property | Fully owned logic rulesets | Shared or licensed frameworks |
| Time to Deployment | 6 to 9 months minimum | 8 to 12 weeks |
| System Maintenance | Internal team reliance | Ongoing SLA dependencies |
| Commercial Risk | Key-person dependency | Vendor lock-in over time |

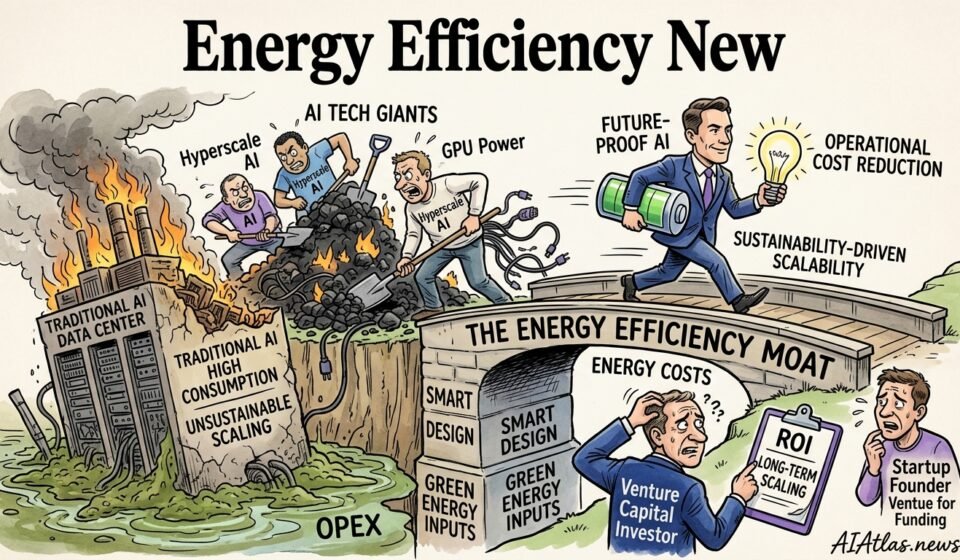

Visualised Workflow Roadmap

To grasp the commercial magnitude of the Tufts research, one must visualise the stark contrast in resource consumption. The primary advantage of a neuro-symbolic model is not merely its logical precision, but the sheer volume of compute cycles it saves by bypassing brute-force pattern matching for standard reasoning tasks.

Below, we have mapped out a direct comparison of energy consumption across a standard enterprise deployment cycle. This visual evidence should arm decision-makers with the financial justification required to pivot away from entirely neural architectures and adopt a more sustainable compute strategy.
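The headline figure also needs translating into a blended number, because only the routed share of traffic gets cheaper. The sketch below applies the same arithmetic as Amdahl's law, assuming every query costs the same energy on the legacy path and a routed query costs 1/100 of that; both assumptions are illustrative.

```python
def blended_reduction(routed_fraction: float, per_task_reduction: float) -> float:
    """Overall energy reduction when only part of the traffic moves to the hybrid path.

    Legacy energy per query is normalised to 1; a routed query costs 1 / per_task_reduction.
    """
    if not 0.0 <= routed_fraction <= 1.0:
        raise ValueError("routed_fraction must be between 0 and 1")
    if per_task_reduction <= 0:
        raise ValueError("per_task_reduction must be positive")
    blended = (1.0 - routed_fraction) + routed_fraction / per_task_reduction
    return 1.0 / blended


for f in (0.1, 0.5, 0.9, 1.0):
    print(f"{f:.0%} of queries routed -> {blended_reduction(f, 100):.1f}x overall")
```

The loop prints 1.1x, 2.0x, 9.2x and 100.0x: routing half of your traffic yields roughly a 2x overall saving, and the full 100x only appears when every query takes the symbolic path.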

Verification and Success Metrics

A theoretical 100x reduction in energy is entirely meaningless if your application latency spikes or your reasoning accuracy degrades. We advise implementing aggressive telemetry from day one to verify that the commercial thesis holds up in live production environments. You must track financial expenditure and technical performance metrics side-by-side.

Success in this domain is highly quantitative. We look for a measurable drop in cloud compute billing correlated directly with the routing of prompts to the symbolic engine. If your monthly cloud expenditure remains flat while your engineering complexity doubles, the hybrid implementation has failed. Prioritise monitoring your cost per query and logical error rates weekly.
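The weekly check can be reduced to a few ratios. This sketch assumes hypothetical weekly aggregates pulled from your billing export and evaluation logs; `hybrid_is_paying_off` encodes the failure condition above, returning `False` when cost per query has not fallen or the logical error rate has risen beyond a tolerance you choose.

```python
from dataclasses import dataclass


@dataclass
class WeeklyTelemetry:
    """One week of aggregates (hypothetical fields; source them from billing and eval logs)."""
    week: str
    compute_cost_usd: float
    queries: int
    symbolic_queries: int  # queries answered via the neuro-symbolic route
    logical_errors: int    # answers that failed your correctness checks


def weekly_metrics(t: WeeklyTelemetry) -> dict:
    """Cost per query, share of traffic on the symbolic route, and logical error rate."""
    if t.queries <= 0:
        raise ValueError("queries must be positive")
    return {
        "week": t.week,
        "cost_per_query_usd": t.compute_cost_usd / t.queries,
        "symbolic_share": t.symbolic_queries / t.queries,
        "logical_error_rate": t.logical_errors / t.queries,
    }


def hybrid_is_paying_off(baseline: WeeklyTelemetry, current: WeeklyTelemetry,
                         error_tolerance: float = 0.0) -> bool:
    """True only if cost per query fell and the error rate rose by no more than the tolerance."""
    b, c = weekly_metrics(baseline), weekly_metrics(current)
    cheaper = c["cost_per_query_usd"] < b["cost_per_query_usd"]
    accurate = c["logical_error_rate"] <= b["logical_error_rate"] + error_tolerance
    return cheaper and accurate
```

Tracking `symbolic_share` alongside cost makes the correlation the section asks for visible: spend should fall as the routed share rises.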

The Long-Term Maintenance Plan

A neuro-symbolic system is not a set-and-forget asset. As your business context evolves, the symbolic rules governing the logic engine will inevitably require updating. We have found that companies failing to treat their symbolic parameters as living code quickly end up with an obsolete intelligence layer that rejects valid user inputs and frustrates customers.

To sustain the operational cost reductions, institute a quarterly review of your logic frameworks. Your engineering leaders must consistently prune redundant rules, expand the symbolic dictionary, and fine-tune the neural interface. This deliberate, ongoing maintenance prevents the model from degrading over time and protects the margins the migration was meant to deliver.
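Part of the quarterly review can be automated. The sketch below assumes you log every rule firing and have reviewers label a sample of rejected inputs; all names are hypothetical. Rules that never fired become pruning candidates (not automatic deletions, since some rules guard rare but important edge cases), and rejected inputs that reviewers marked valid point at gaps in the symbolic dictionary.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def review_rules(rule_names: Iterable[str],
                 fired: Iterable[str],
                 rejections: Iterable[Tuple[str, bool]]) -> Dict:
    """Summarise a quarter of rule-engine activity.

    rule_names: every rule currently deployed
    fired:      one entry per logged rule firing
    rejections: (input_id, reviewer_says_valid) for inputs the engine rejected
    """
    counts = Counter(fired)
    never_fired = sorted(name for name in rule_names if counts[name] == 0)
    false_rejections = [input_id for input_id, valid in rejections if valid]
    return {
        "prune_candidates": never_fired,
        "false_rejections": false_rejections,
        "firing_counts": dict(counts),
    }
```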

Frequently Asked Questions

- How does a neuro-symbolic model differ from a standard large language model?

  - Standard models rely entirely on learned probabilistic pattern matching, which requires substantial compute to predict each successive token of an answer. Neuro-symbolic models delegate strict logical tasks to a symbolic engine running explicit rules, drastically reducing the computational load for those tasks.

- Can this approach integrate safely with our existing enterprise architecture?

  - Yes. It typically operates as a routing layer positioned above your current systems, so you can implement it iteratively, shifting high-cost inference tasks to the neuro-symbolic engine while leaving legacy databases intact.

- Is the 100x energy reduction realistically achievable in standard commercial settings?

  - The Tufts research demonstrates this figure in controlled reasoning benchmarks, but actual enterprise results depend heavily on how much of your workload is routed to the symbolic engine. Companies dealing with heavy compliance, logic verification, and structured data queries are likely to see the sharpest drop in operational costs.